Innovator Spotlight

Why This Woman Wants Our AI To Understand Our Feelings

As anyone who's tried to have a deep conversation with Siri can attest, artificial intelligence doesn't show much emotion. Our tech has plenty of IQ but little EQ.

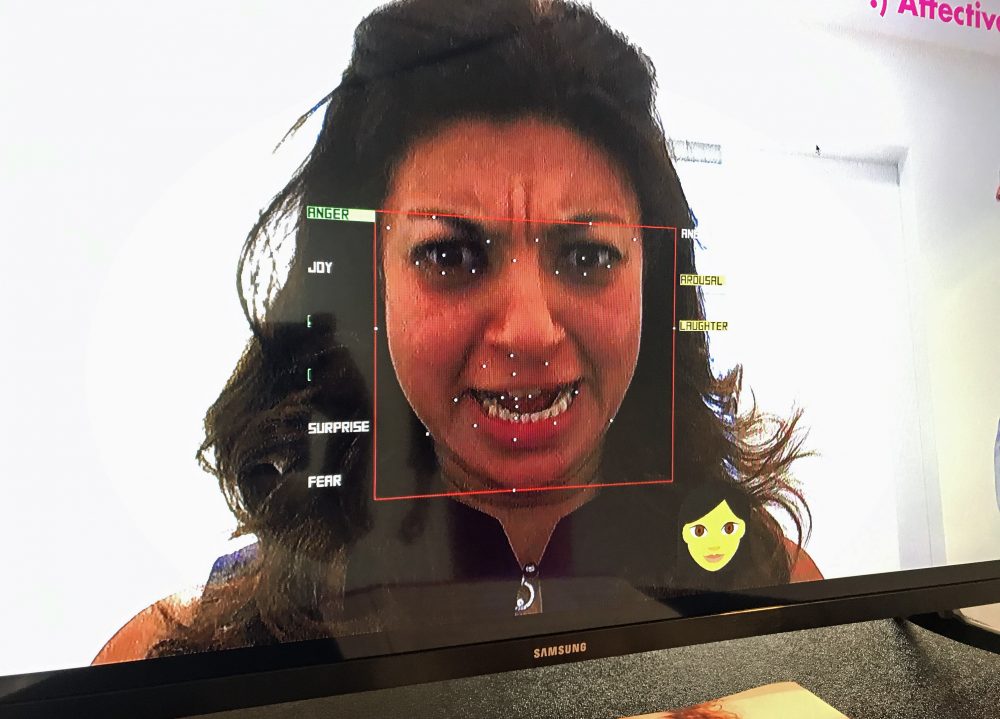

Rana el Kaliouby, the CEO and co-founder of Affectiva, a company that spun out of MIT in 2009, is working on technology to change that — and essentially bring more emotion into AI platforms.

El Kaliouby started her work on "emotion AI" with MIT professor Rosalind Picard. Together, they built tech that can analyze subtle facial expressions and make a judgment call on a person's emotional response to a situation.

But el Kaliouby says her real goal is to create an AI platform that can understand emotions through all of our natural human tendencies: facial expressions, gestures and tone of voice. Earlier this month, Affectiva announced that it has developed the capability to measure some elementary emotions in our speech, such as anger or laughter.

BostonomiX caught up with el Kaliouby to talk about the tech she's working on and why she thinks it has the potential to revolutionize how we relate to our devices.

What 'Emotion AI' Means. And Why She's Obsessed With It

When el Kaliouby was working on her doctorate at Cambridge University, she realized she was spending more time with her laptop than with any human being. It was her portal for communicating with her family back in Egypt, and yet that little device couldn't understand her.

"I was intrigued with how much time we spend with our technologies, yet our devices have absolutely no clue how we're feeling," she said. "They know where we are, they know who we are, they know what we're doing, but they don't know how we're feeling."

El Kaliouby says when you look at humans, our emotional intelligence is fundamental to our overall intelligence.

She thinks the same is true for tech, and she envisions a world where our devices can sense and respond to our emotions in real time.

So, for example, you ask Amazon Alexa to find the weather in Boston, but she thinks you said Austin. When you ask again in a more annoyed tone of voice, she gets the hint (she made a mistake and you're frustrated), and she can learn from that behavior and try a different tactic. In other words, you can give your devices positive and negative feedback to change what they're doing.

Lost In Translation?

El Kaliouby says the company's facial expression tech is often used by market research firms to test how consumers respond to ads by some 1,400 brands in 87 countries around the world. And that means it needs to understand cultural nuances.

"We found that for instance, Brazilians are more expressive than East Asians, so we've had to tune our classifiers, so that we can detect the very subtle emotions we see in Japan, for instance," she explained.

They've also detected gender differences in facial expressions.

"Not surprisingly, women, they smile more, they express more positive emotion," el Kaliouby said. But she quickly explained that's culturally specific. "In the U.S., women smile more than men. In the U.K., there's no difference between men and women."

Why She Doesn't Want This Tech Used For National Security

When Affectiva spun out of MIT, the company decided on a few ground rules. One of them was that people would have to consent to the use of this technology. El Kaliouby says that was "non-negotiable."

"Emotion data is very personal," she said. "And we feel that it's very important that people know or consent before their data is collected and analyzed."

So, to date, they've steered clear of surveillance and security because often people don't know when they're being monitored. But el Kaliouby said maybe one day they'll reconsider as safety becomes more of a concern.