
As Flu Ebbs, Google Tracker Looking Way, Way Too High

Just a little follow-up here: Let the historical record show that even though Keith Winstein does not even work for The Wall Street Journal anymore — he's now a computer science grad student at MIT — he still seems to have scooped the country on "Google Flu Trends."

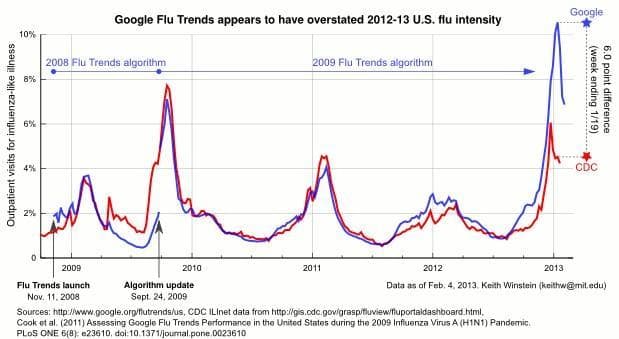

Keith pointed out back in early January, when "Worst flu season ever!" hype was all the rage, that the Google Flu Trends estimates cited by alarmists seemed dramatically higher than the CDC's official estimates. (See this earlier post.) He has now generated the graph above comparing the estimates of the much-celebrated Google Flu Trends in blue with the gold-standard data from the CDC in red. He puts a staid academic title on it, but personally I think I'd headline it, "Wow, Google Flu Trends Is Looking Way, Way Too High."

He quantifies below, noting among other things that in New England, Google appears to have been off by a non-trivial factor of five:

Looking at the week where Google Flu Trends peaked nationally (1/13-1/19), in eight of the ten HHS regions, Google Flu Trends' estimate is more than double the CDC's figure for the corresponding week. The better regions were regions 7 and 8, but even there the disagreement is very large (1.85 percentage points for region 7, 3.5 percentage points for region 8). On the bad side, in New England where I am (HHS region 1), Google's estimate was 14.2%, compared with a CDC value of 2.9% (off by almost 5x). In aggregate the root mean squared error for that week is more than 0.073, compared with figures in the PLoS ONE paper of less than 0.008.
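For readers who want to check the arithmetic, here is a minimal Python sketch of the two comparisons Keith describes: the ratio between the two estimates and the root mean squared error. Only the New England figures (14.2% and 2.9%) come from his note. The function names are made up for illustration, and the sketch assumes his RMSE figures are expressed as fractions rather than percentage points, which the post does not state. The other regions' values are not given here, so the ten-region RMSE is not reproduced.

```python
import math

def ratio(google_pct, cdc_pct):
    """How many times larger the Google estimate is than the CDC figure."""
    return google_pct / cdc_pct

def rmse(estimates, references):
    """Root mean squared error between two equal-length lists of values."""
    if not estimates or len(estimates) != len(references):
        raise ValueError("need two non-empty lists of equal length")
    squared = [(e - r) ** 2 for e, r in zip(estimates, references)]
    return math.sqrt(sum(squared) / len(squared))

# HHS region 1 (New England), week of 1/13-1/19, as quoted above.
region1_google = 14.2  # percent, Google Flu Trends estimate
region1_cdc = 2.9      # percent, CDC figure

region1_ratio = ratio(region1_google, region1_cdc)  # a bit under 5
region1_gap = region1_google - region1_cdc          # gap in percentage points

# With a single region, the RMSE is just the absolute gap.
# Assumption: values are converted to fractions (14.2% -> 0.142).
region1_rmse = rmse([region1_google / 100], [region1_cdc / 100])

print(f"ratio: {region1_ratio:.2f}x, gap: {region1_gap:.1f} points")
```

To reproduce the aggregate figure, you would pass all ten regional pairs to `rmse` using the data linked at the end of this post.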

I asked Kelly Mason of Google.org’s Global Communications and Public Affairs team if she could cast any light on these disparities. Previously, she had emphasized that the CDC numbers were still early estimates. She emailed:

Most important thing to remember is that Flu Trends is a complementary data source to the systems run at the CDC. What worked well this season was timing of the flu season: the start and peak has shown good alignment nationally and in a number of regions. There is also value in the early signal. Flu Trends showed intense nationwide activity 1-2 weeks before the CDC reported widespread activity on their influenza activity map.

As you've noted, some regions aligned well, while others had lower numbers than our estimates. As we indicated in the Nature paper, Flu Trends performs a full model evaluation and possible model update at the end of each season. We'll be working with the CDC to help understand the areas where we didn't see as good of alignment.

I admit, it's a little tempting to gloat when the greatest Power That Is in the infosphere gets something wrong. But of course, Google Flu Trends is clearly a useful early-warning tool, and Keith Winstein's point is more that whatever the Google tracker's errors are, they may offer useful lessons to the world of Big Data.

Commenters on our previous post offered some interesting theories on the disparity, including:

Most likely problem, I say this without evidence, is that this season has seen a high convergence of things that people could or commonly do use the word "flu" to describe: Winter vomiting bug/stomach "flu" which is in reality caused by norovirus, highest incidence of whooping cough for 60 years, several nasty colds going around including those caused by Rhinovirus or Respiratory Syncytial virus. This convergence would skew the Google search higher than the actual data coming from CDC. Just my thoughts.

If you're reporting based on search results, the results are likely to skew when enormous publicity begins to arise about flu outbreaks. The algorithm may be relatively accurate in predicting early for typical flu outbreaks, but once widespread news reportage kicks in, it would seem people would turn to Google to check symptoms or read up on the flu even if they did not have flu at that time.

No reporting or surveillance system is perfect, but each can add to the picture. Even the CDC's system has its problems in terms of relying on testing. The tests aren't perfect, and many people do not get tested. Also, I believe some states stop testing after flu is widespread.

I'm betting on Google - you have too many layers of statistics with CDC. Knowing approximately what is happening early is better than learning exactly what happened two weeks later (and after you needed the information).

Readers, if you'd care to delve into the data, the CDC estimates are here and Google Flu Trends is here. Keith answers the question "How accurate is Google Flu Trends?" on Quora here.

I'm thinking the ultimate moral of the story may still be his comment in our first post: "This could be a cautionary tale about the perils of relying on these 'Big Data' predictive models in situations where accuracy is important."

This program aired on February 4, 2013.