
Is 'Google Flu Trends' Prescient Or Wrong?

Has Google’s much-celebrated flu estimator, Google Flu Trends, gotten a bit, shall we say, over-enthusiastic?

Last week, a friend commented to Keith Winstein, an MIT computer science graduate student and former health care reporter at The Wall Street Journal: “Whoa. This flu season seems to be the worst ever. Check out Google Flu Trends.”

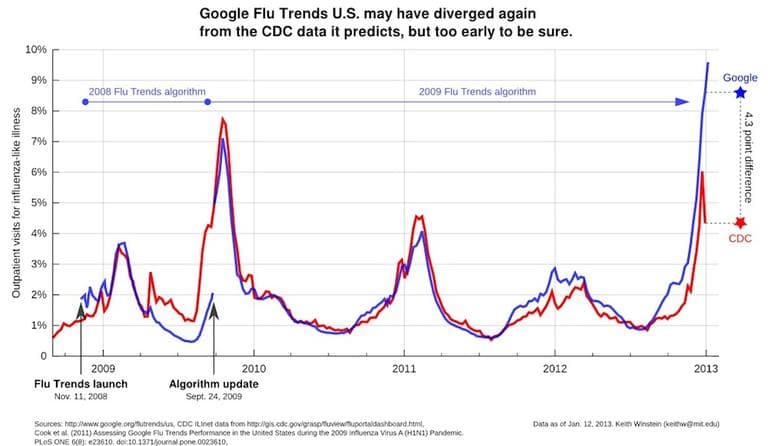

Hmmm, Winstein responded. When he checked, he saw that the official CDC numbers showed the flu getting worse, but not nearly at Google’s level. (See the graph above.) The dramatic divergence between the Google data and the official CDC numbers struck him: Was Google, he wondered, prescient or wrong?

He began to explore — as much as a heavy grad-student schedule allows — and shares his thoughts here. Our conversation, lightly edited:

I accept the caveat that these predictive algorithms are not your specialty, but still, from informed, casual observation, what are you seeing, in a preliminary sort of way?

Well, I'm certainly not an expert on the flu. The issue that’s interesting from the computer science perspective is this: Google Flu Trends launched to much fanfare in 2008 — it was even on the front page of the New York Times — with this idea that, as the head of Google.org said at the time, they could out-perform the CDC’s very expensive surveillance system, just by looking at the words that people were Googling for and running them through some statistical tools.
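The article doesn't spell out which "statistical tools" Google uses. As a rough illustration only, the sketch below assumes a single-variable model: the share of doctor visits for influenza-like illness is fit against the share of searches for flu-related terms, with both proportions converted to log-odds and related by a straight line. The function names and numbers are hypothetical, and this is not Google's code.

```python
import math

# Hypothetical model form, assumed for illustration; not Google's production code.

def logit(p: float) -> float:
    """Log-odds of a proportion strictly between 0 and 1."""
    return math.log(p / (1.0 - p))

def inv_logit(x: float) -> float:
    """Inverse of logit: maps log-odds back to a proportion."""
    return 1.0 / (1.0 + math.exp(-x))

def fit_logit_linear(query_frac, ili_frac):
    """Ordinary least squares fit of logit(ili) = b0 + b1 * logit(query)."""
    xs = [logit(q) for q in query_frac]
    ys = [logit(p) for p in ili_frac]
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b1 = sxy / sxx
    b0 = my - b1 * mx
    return b0, b1

def estimate_ili(b0: float, b1: float, query_frac: float) -> float:
    """Estimate the ILI share of doctor visits from a new week's search share."""
    return inv_logit(b0 + b1 * logit(query_frac))

# Usage with invented past-season values (not real search or CDC data).
past_queries = [0.001, 0.002, 0.004, 0.008]
past_ili = [0.010, 0.016, 0.027, 0.045]
b0, b1 = fit_logit_linear(past_queries, past_ili)
print(estimate_ili(b0, b1, 0.006))
```

Once the two coefficients are fit on past seasons, a new week's search share yields an estimate before doctors' reports arrive. The sketch also shows the weakness Winstein describes: the model only knows the relationship it was fit on, so a season that behaves differently can throw it off.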

It’s a provocative claim and if true, it bodes well for being able to track all kinds of things that might be relevant to public health. Google has since launched Flu Trends sites for countries around the world, and a dengue fever site.

So this is an interesting idea, that you could do public health surveillance and out-perform the public health authorities [which use lab tests and reports from ‘sentinel’ medical sites] just by looking at what people were searching for.

Google was very clear that Flu Trends wouldn't replace the CDC, but the company has said it would out-perform the CDC. And because its estimates come out about 10 days earlier than the CDC's, it might be able to save lives by directing antiviral drugs and vaccines to afflicted regions.

And their initial paper in the journal Nature said the Google Flu Trends predictions were 97% accurate...

That was astounding. However, it is often a problem with computers that they only tell us things we already know. When you give a computer something unexpected, it does not handle it as well as a person would.

Shortly after that report of 97% accuracy, we had the unexpected swine flu, which arrived at a different time of year from the normal flu season and had different symptoms from normal, so Google's site didn't work very well.

And the accuracy went down to 20-something percent?

To a 29 percent correlation, and it had just been 97 percent. So it was not accurate. And what Google is predicting is not the most important measure of flu intensity. What they predict is the easiest measure, which is the percentage of people who go to the doctor and have an “influenza-like illness.” You can imagine that’s related to people who search for things like fever on the Internet. But generally what public health agencies consider more important are measurements on lab tests to determine who actually has the flu.
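The 97 percent and 29 percent figures are correlations between Google's estimates and the CDC's numbers. Assuming the standard Pearson coefficient is meant, a minimal sketch of how such a figure is computed follows; the weekly values are invented for illustration and are not CDC data.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)

# Invented weekly ILI percentages, for illustration only (not CDC data).
observed = [1.0, 1.5, 2.5, 3.5, 3.0]
# An "estimate" that is always exactly double the observed value.
estimate = [2 * v for v in observed]
print(round(pearson(observed, estimate), 6))  # prints 1.0
```

Correlation measures whether two series rise and fall together, not whether their levels agree: an estimate that always runs at exactly twice the observed value still has a correlation of 1. A high correlation on its own therefore would not rule out an estimate that is consistently too high.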

Google has tried to predict the real flu and so far has not succeeded. This is another illustration of how computers can tell us things that are not always what we want to know.

In 2009, Google retooled their algorithm, and did what they called their first annual update to correct the under-estimate they had during swine flu. They brought the accuracy back up again, based on new evidence about what people searched for during swine flu. And that was the last annual update, in the fall of 2009. They say further annual updates have not been necessary.

And now we are in early 2013, and they're predicting super-high levels. The CDC reported Friday [Jan. 11] that for the week of December 30, 2012, through January 5, 2013, 4.3% of doctor visits were by patients with influenza-like illness, down from 5.6% the previous week. By contrast, on Jan. 6 Google finalized its prediction for the same statistic at 8.6%, up from 7.9% the previous week. That gap is larger than any that has occurred before. The current Google estimate (for the week of 1/6) is 9.6%, with no sign of a decline yet.

So what do you think is going on, that they’re so different?

It is too soon to tell whether Google is wrong or just prescient, because both Google's and the CDC's numbers have been going up rapidly. It's true that Google has been high, but maybe they're just early. If next week the CDC says, “Hey, flu just went up to 9 percent,” we'll say Google was great: they were early, they gave good warnings.

One person at Google said in an email that because this is such an early flu season, they suspect people's behavior around going to the doctor during the week of Christmas might be different. They think the worried well, people who are ultimately not sick but are worried about it, are less likely to go to the doctor over Christmas. So although they might search for symptoms, they won't go to the doctor, and that might explain why the search numbers are high but the actual doctor-visit numbers are lower.

But the actual virological numbers are even lower, and Google has never trained the algorithm on a Christmas flu season. So it's not something the computer would necessarily know to expect.

Another possibility is, just as the 2008 algorithm under-estimated the 2009 flu, the retooled 2009 algorithm is overestimating the 2012-2013 flu. It will be hard to render a definitive judgment until we have the benefit of hindsight. But depending on how it shakes out, this could be a cautionary tale about the perils of relying on these "Big Data" predictive models in situations where accuracy is important.

We plan a follow-up as we get more information, and we asked Google for comment. In an email, Kelly Mason of Google.org's Global Communications and Public Affairs team, responded:

I think the most important point is that data is still coming in, with some regions reporting flu activity more quickly than others. (The disclaimer the CDC uses is below). Basically - it's still early.

In past years, CDC reports are updated as new information comes in. We validate the FluTrends model each year. Since a 2009 update, we've seen the model perform well each flu season with no additional updates required. If you have more specific questions, please do let me know.

From the CDC:

"As a result of the end of year holidays and elevated influenza activity, some sites may be experiencing longer than normal reporting delays and data in previous weeks are likely to change as additional reports are received."

http://www.cdc.gov/flu/weekly/

Readers, thoughts? Anybody placing any bets on whose estimates will prove most accurate?

(Updated at 3:06 p.m. with Google comment. Updated 6:20, changing Google flu "predictor" to "estimator." )

This program aired on January 13, 2013.