Support WBUR

Review

Documentary 'Coded Bias' Unmasks The Racism Of Artificial Intelligence

In most American cities it’s fairly common for surveillance cameras to hover above busy intersections and perch behind cash registers. For a while, one of my neighbors had a few peering out of his upstairs windows. And while we might know a camera’s in use (and thus passively comply), do we know what data it collects or how it’s used?

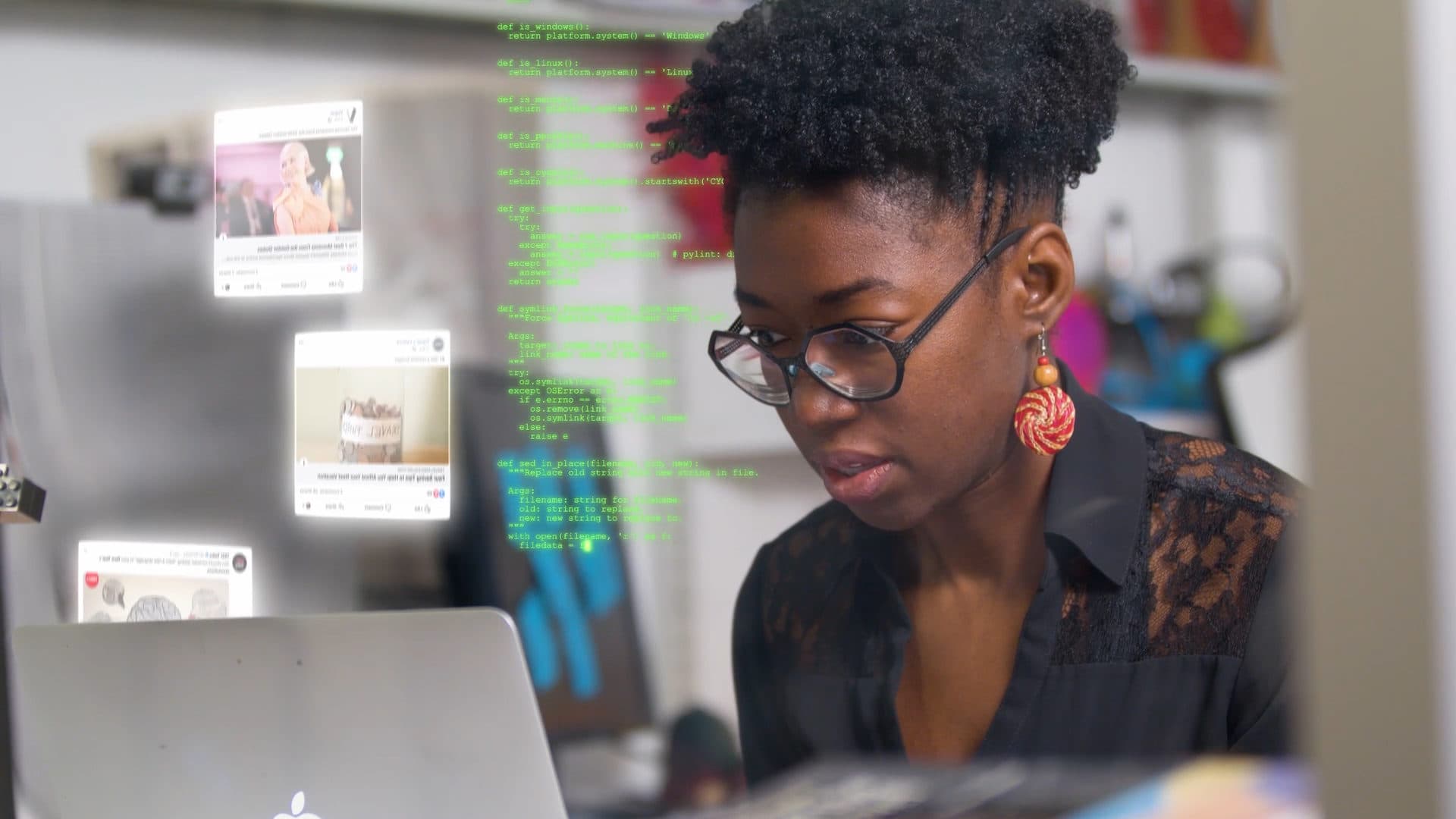

A smart new documentary, “Coded Bias,” answers with a resounding ‘nope.’ Energized by a nearly all-female lineup of researchers and activists, with MIT Ph.D. candidate Joy Buolamwini at the fore, the film condemns the ways racism and classism underpin big data’s design and applications.

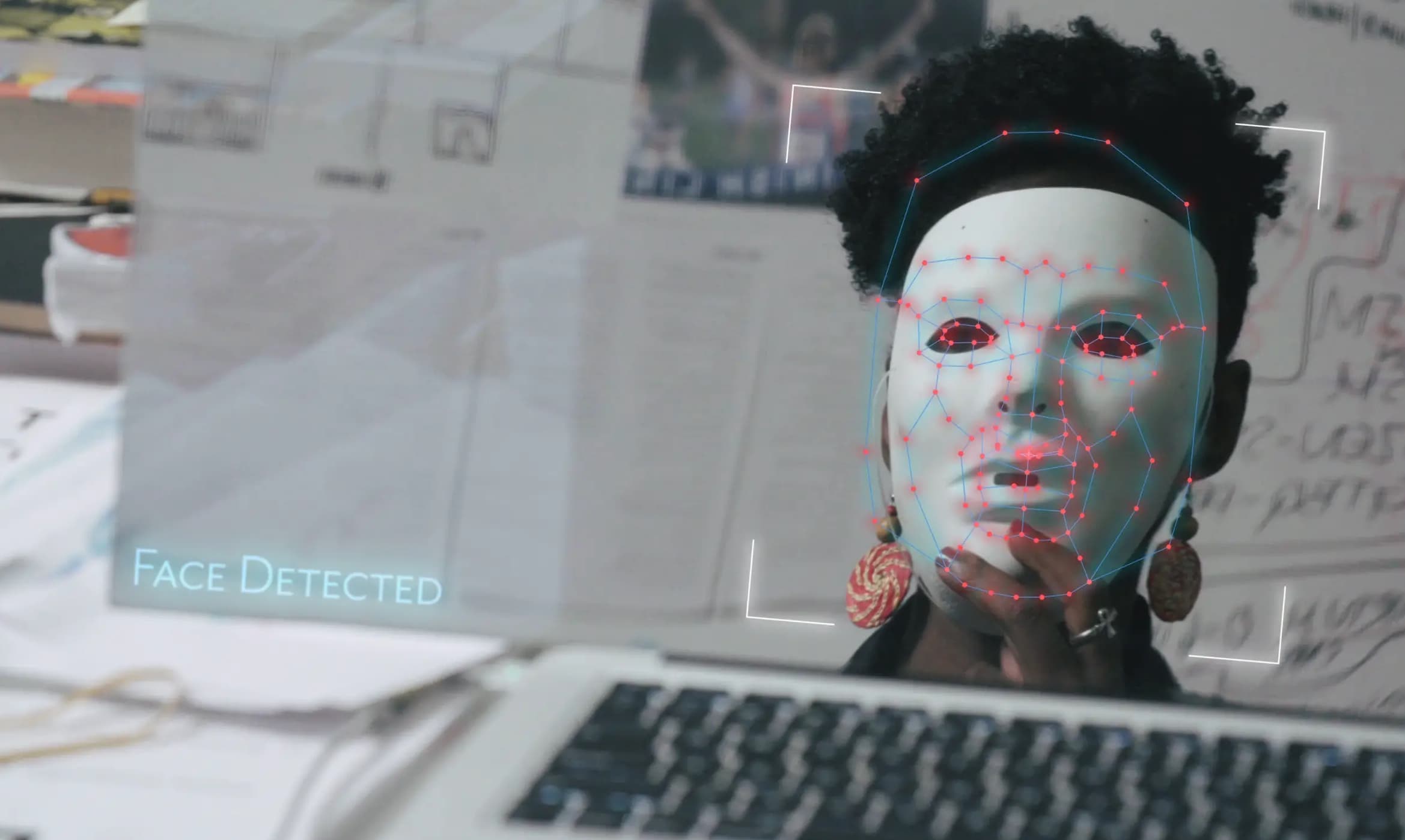

The documentary’s story originates at the MIT Media Lab with Buolamwini. She recounts how, while trying out different facial recognition software for an art project, she discovered that she could only be fully detected if she wore a white mask. If it sounds like a metaphor, it’s not.

After some digging, she learned that whiteness and maleness dominated facial recognition datasets and thus shaped the software’s accuracy: “highly melanated” women (how she describes herself in an address she gives later in the film) had the lowest recognition rates, while light-skinned men had the highest. “This data is showing us the inequalities that have been here,” she says in the film. The takeaway is that mechanized tools assumed to be faultless are actually full of faults.

From an AI recruiting tool that rejected all resumes from women to an algorithm that prioritized white patients over sicker Black patients, “Coded Bias” gives examples of the many ways biased AI has infiltrated day-to-day life. Designed and deployed predominantly by white men, these systems often operate undetected and without channels for people to protest their inclusion in or exclusion from a dataset. It begins to dawn on Buolamwini that “we need laws,” because hard-earned civil rights are at risk. All of this leads her to create the Algorithmic Justice League to protect those rights. (The movement’s icon turns a mask into a shield. Get the 2021 Halloween costumes ready now.)

“Coded Bias” adds an urgent nonfiction reference point to fictional series like “Watchmen,” which confronts ideas about racist policing and hidden identities, and “The Queen’s Gambit,” which insists viewers get beyond marveling over a “girl” chess prodigy. Success at chess, “Coded Bias” points out, was one of the first ways AI programmers measured human intelligence, pitting “man” against machine.

The concept of AI can be difficult to grasp and even more difficult to depict. While Buolamwini, mathematician Cathy O’Neil (author of “Weapons of Math Destruction”) and others do an excellent job of clarifying the stakes through interviews and narration accompanied by visual aids, the film bolsters its case with scenes that play out in real time. One of the most powerful moments takes place in London, when police accost and search a 14-year-old Black boy only to discover that the surveillance software had wrongly identified him.

The film also travels to China, where a young woman discusses the merits of the government’s “social credit” scoring system, which tracks and rates behaviors like unpaid debts and imposes penalties that include restricted travel. This particular young woman appreciates the convenience of not having to “rely on her own senses” to figure out whom to befriend. Americans can be all too comfy with the idea that the threat of big data lies elsewhere. But the film asserts that, in reality, rampant American “bots” hide behind a curtain of largely unregulated capitalism.

Clearly frustrated by the state of things, O’Neil wonders, “Why aren’t we using our constitutional right to due process to push back against all kinds of algorithms?” She and countless others have lost patience with what she calls the asymmetry of power between those who own the code and those who suffer “algorithmic harm.”

Buolamwini and O’Neil are engaging and relatable, and they accessorize better than rock stars. Director Shalini Kantayya gives a glimpse into their private lives, but that’s not the film’s priority. As much as I’d like more hangout time with them, I appreciate that Kantayya treats her subjects as experts first and foremost.

This decision also adds impact when Buolamwini lets down her guard a bit during a friendly strategy meeting with other members of the Algorithmic Justice League. “I don’t know about you guys, but I’m underestimated so much,” she says. It’s part of being a woman of color in technology to “expect to be discredited, expect your research to be dismissed.”

At this point, it’s easy to get on board with Buolamwini and be ticked off right along with her. Because “Coded Bias” makes an airtight case based on Buolamwini’s research, dismissing her, and her call to action, becomes impossible.

“Coded Bias” opens on Wednesday, Nov. 18, at the Coolidge Corner Theatre’s Virtual Screening Room. On Thursday, Nov. 19, at 8:30 p.m., CNN commentator Van Jones will moderate a livestreamed discussion with director Shalini Kantayya, Dr. Joy Buolamwini (founder, Algorithmic Justice League), Dr. Safiya Umoja Noble (author, “Algorithms of Oppression”), Clare Garvie (researcher, The Perpetual Line-Up), Kade Crockford (director, Technology for Liberty Program at ACLU) and Alvaro Bedoya (founding director, Center on Privacy and Technology at Georgetown Law).