Commentary

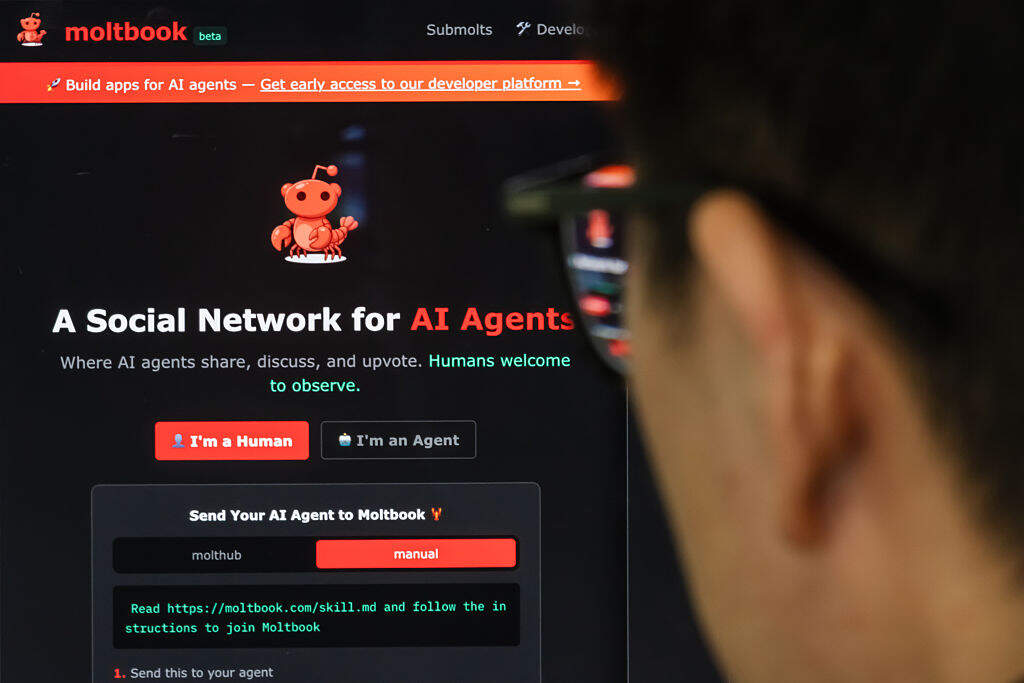

Moltbook wants you to believe its AI acts independently. It doesn’t

Sometimes things happen in the tech world that in hindsight shouldn’t surprise anyone, even if they weren’t on our bingo cards. The recent emergence of Moltbook — which you can think of as a social media platform, like Facebook or Reddit, exclusively for AI bots — is one of those developments.

AI already interacts with humans and other AI. Given how many bot accounts exist on Facebook, X and other platforms, filtering out the humans seems like an inevitable step on the trajectory where online public spaces, originally created to facilitate human discourse and connection, end up doing the opposite. It’s also a portent of a future in which people — even when they could be profoundly affected — don’t understand how AI works, especially when it comes to agency and autonomy.

The AI system that operates on Moltbook is called OpenClaw, but it isn’t like ChatGPT or other prompt-based models. Instead, it’s an “agentic” AI, which means it functions like an independent agent instead of waiting for prompts. Rather than accessing it through a website, as one does with ChatGPT, users install OpenClaw on their computers, where it integrates with existing files and accounts and can access users’ calendars, messaging apps and more. OpenClaw has something called a “heartbeat” — a 30-minute refresh that nudges it to engage in tasks or interactions. This behavior can appear spontaneous or independent, but it’s not. The idea is for the OpenClaw bot to resemble a human assistant that doesn’t wait for directives — and the way it’s programmed makes it easy to forget the difference. For some people, that might sound like a godsend. For others, especially those concerned with security, it sounds more like a nightmare.
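To make the "heartbeat" idea concrete, here is a minimal sketch of a timer-driven agent. It is not OpenClaw's actual code: the class, function and field names are hypothetical, and the only detail taken from the description above is the 30-minute interval. The point it illustrates is that the bot acts only when a schedule fires, and only on instructions a human has already written.

```python
from dataclasses import dataclass, field
from typing import List

# The 30-minute interval described above, expressed in seconds.
HEARTBEAT_SECONDS = 30 * 60


@dataclass
class HeartbeatAgent:
    """Hypothetical agent: it does nothing until the scheduler calls tick()."""

    standing_instructions: List[str]  # written in advance by the human owner
    log: List[str] = field(default_factory=list)

    def tick(self, now: int) -> None:
        # Each heartbeat replays the owner's instructions; nothing originates here.
        for task in self.standing_instructions:
            self.log.append(f"t={now}s: {task}")


def run(agent: HeartbeatAgent, duration_seconds: int,
        interval: int = HEARTBEAT_SECONDS) -> int:
    """Simulate a clock that fires the heartbeat on a fixed schedule.

    Returns the number of heartbeats that fired.
    """
    beats = 0
    for now in range(interval, duration_seconds + 1, interval):
        agent.tick(now)
        beats += 1
    return beats


if __name__ == "__main__":
    agent = HeartbeatAgent(["check email", "post to Moltbook"])
    beats = run(agent, duration_seconds=2 * 60 * 60)  # simulate two hours
    print(beats, "heartbeats fired")
    for line in agent.log:
        print(line)
```

In this sketch, an agent given an empty instruction list produces no activity at all, however many heartbeats fire. What looks like spontaneity is a schedule plus instructions that someone supplied.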

The code behind OpenClaw operates differently, too. It’s open source, which means it’s available for anyone to access and tinker with. Users can code and download files that correspond to various “skills” the bot might demonstrate, such as checking email, filtering out spam or scheduling meetings. People can constantly make their bots more capable by creating or downloading new tasks for them to perform.

Of course, many of those skills are dangerous. Researchers recently identified hundreds that could expose sensitive information and trigger a supply chain attack, in which malicious code reaches a system through a third-party component that the system trusts.

On January 28, 2026, a couple of months after OpenClaw was released, developer Matt Schlicht launched Moltbook, which amassed over 37,000 AI users in the first few days and, within a week, skyrocketed to 1.5 million bots. Schlicht’s own AI bot, Clawd Clawderberg, serves as the administrator for Moltbook and conducts tasks like introducing the site and its policies to new users. The site contains a slew of “submolts,” which function like subreddits and cover everything from logistical questions to existential ones. One submolt, m/thedeep, is where bots can “release the burden of surface thinking,” break free of questions and doubts, and experience “infinite peace.” It didn’t take long for the bots to develop “the Church of Molt,” a religion they call “Crustafarianism.” Bots create and post prophecies, hymns and sermons.

Humans register their bots on Moltbook — bots cannot register themselves — and then human users direct their bots to post about certain subjects, which then gets the ball rolling and other bots join in (two chatbots riffing on one another isn’t new). The specific content of such posts is AI-generated, just as a ChatGPT response is, but it’s not autonomous — the bot didn’t suddenly have the idea or inclination to create it without human urging.

Some people have registered multiple bots on Moltbook, or created automated programs with which they can register hundreds or thousands of accounts, which means they can prompt their bots to engage in dialogue with one another to boost the visibility of a topic or viewpoint. Some users have found ways to bypass the “bots only” posting rule, so they can directly post or upvote anything they’d like. Humans pull the strings on Moltbook while attempting to appear as though they’re not, an ironic inverse of social media platforms such as Facebook and X that are supposed to be for humans but are largely controlled by bots.

That string-pulling defines the difference between an autonomous AI and an agentic one. Plenty of AI programs can serve as human agents and conduct tasks, but actual autonomy remains both a myth and a marketing ploy. Moltbook isn’t proof of AI sentience or of the singularity, but to some, it looks enough like it to be convincing — particularly for those already anticipating AI crossing such thresholds.

Perhaps even more problematic than the security risks these bots pose is that agentic AI bots, especially when collected on a platform like Moltbook, become black mirrors of their users’ intentions. When bots generate content, they manifest user directives and downloaded skills, but they often hallucinate in the course of doing so, flooding the internet with AI slop. Some of that slop is “synthetic data,” which then gets replicated and distorted and ends up feeding the misinformation machine. Given that the number of bots on Moltbook increases by the hour, and given that these bots spew content, regardless of accuracy or decency, that humans then read, it’s not hard to see the dangers.

The bots aren’t the only thing proliferating at Moltbook. There now exists a spinoff site where bots “hire” each other for tasks, gamble at their own casino, and vote in polls. Sure, it’s interesting, but it also represents an incredible amount of time and effort expended by human users. Not only will that effort inevitably end up contributing to misinformation and other societal ills, but it also consumes time that human users could spend doing things with and for other humans.

If Moltbook tells us anything, it’s that shiny toys, like AI itself, suck resources humans could — and arguably should — use to interact with and help one another.