The doctor is in — or is it AI?

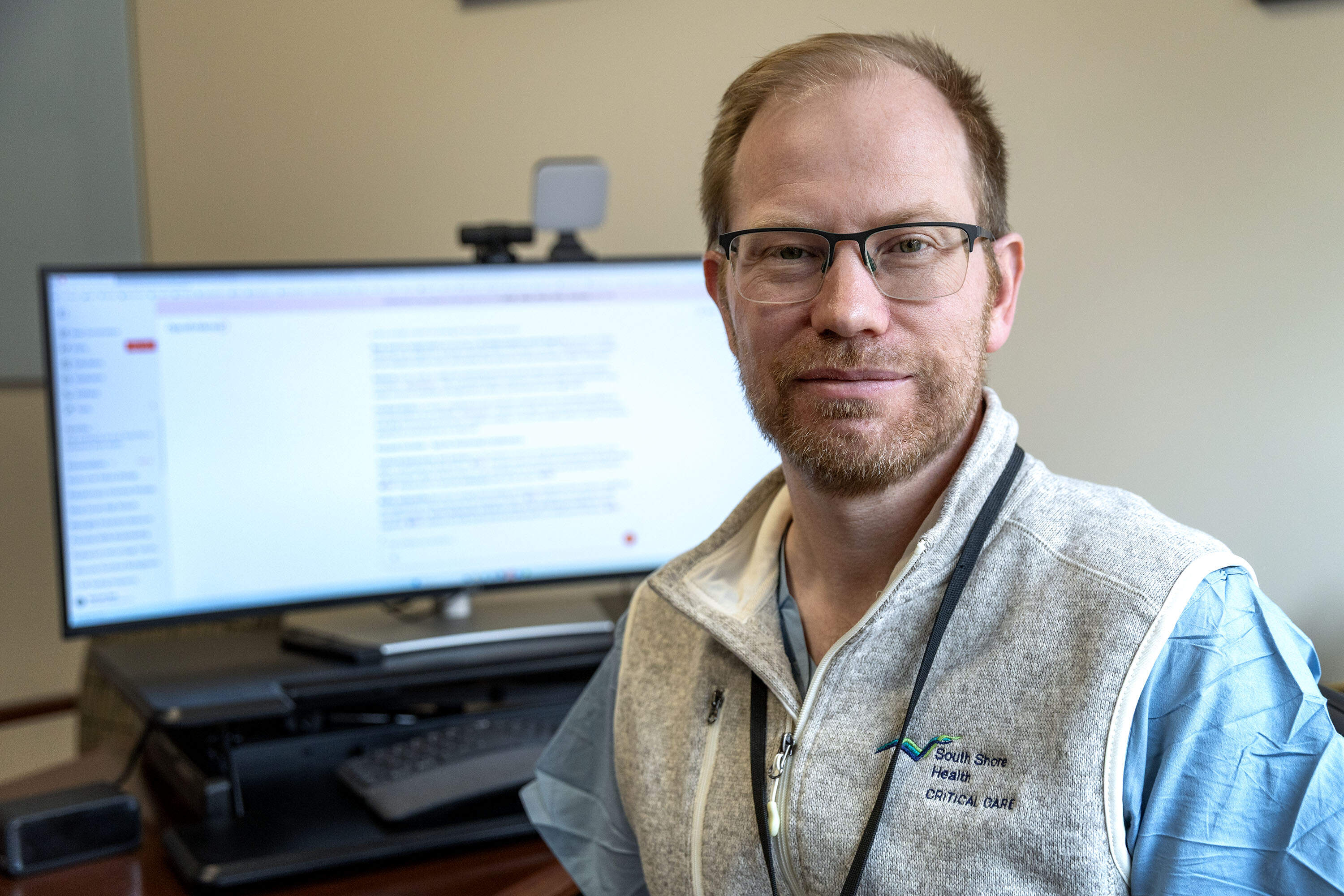

South Shore Hospital's Dr. Sam Ash quickly typed details into his computer about a new patient brought to the critical care unit after she was discovered unconscious in a bathroom, surrounded by pill bottles.

The 40-year-old woman had a history of mental illness, and the medications found beside her included a blood pressure drug, an anti-anxiety medication, the antipsychotic Seroquel and the anti-seizure drug Depakote.

The Depakote stood out to Ash. At high enough levels, he said, it's toxic and can require dialysis to stop it from damaging a person's organs.

"This is something I know, but off the top of my head I can't remember the exact level," said Ash, who treats patients and also serves as the Weymouth hospital's vice president of information technology and innovation.

Ash pulled up OpenEvidence, an artificial intelligence platform that doctors are increasingly using to help diagnose patients. Often called "ChatGPT for doctors," OpenEvidence is just one of the powerful AI tools medical workers are adopting to assist them in caring for patients.

Many doctors are employing AI scribes to take notes during their patient visits. But diagnostic AI tools are also entering more and more doctors' offices and hospitals, from inventions that sharpen CT scans to generative AI chatbots that analyze vast troves of medical data and spit back guidance.

A recent survey by the American Medical Association found about 80% of doctors used AI on the job last year — double the rate from three years ago. Analysts estimate the global market for AI in healthcare is worth $39 billion, a figure expected to explode over the next decade.

The technology's swell in popularity within the industry is giving rise to both fears and hopes about what it will mean for patients' health.

Learning to trust the machines

Ash asked OpenEvidence to check how much Depakote was found in his patient's blood — and whether the total met the bar for intensive treatment. The answers came immediately. She didn't need dialysis.

But the AI didn't stop there. OpenEvidence told Ash to look at the patient's heart and breathing, and it nudged him to ask if there were other medications she may have taken.

"It sort of prompts you to remember — don't get fixated on this idea of the Depakote — remember to check to make sure that she didn't take something else where the bottle wasn't seen," Ash said. "This is part of our protocol, and so it's something that we're doing already, but it's sort of a helpful reminder."

OpenEvidence was co-founded by an AI entrepreneur and a Harvard Ph.D. student. It's free to use, funded by advertising, and has experienced meteoric growth over the past year. The company has partnered with organizations such as the New England Journal of Medicine. OpenEvidence estimates at least 40% of all U.S. doctors use its AI tool.

Ash said one benefit is that it provides links to primary sources of information, such as published medical studies. He compared it to a medical reference book with easily clickable supporting research.

And Ash emphasized it's not the only tool he uses to help diagnose patients.

"I'm looking at multiple sources," Ash said. "I'm looking at OpenEvidence to start, but then I'm confirming my decision and my information with either the primary source literature, or something that's been around and trusted and validated."

Many doctors said they don't completely trust AI. In the American Medical Association's survey, one of their biggest concerns was keeping patients' information confidential.

South Shore's policy is that doctors should not enter protected patient details into OpenEvidence, although Ash said it's possible doctors could make mistakes. But he said that, unlike other online tools, OpenEvidence complies with federal medical privacy laws. And without it, he said, people will resort to less secure platforms.

"People are going to use Google or people are going to use ChatGPT, and that we really don't want," Ash said, because then "we really don't have confidence that what they're putting into those search tools is going to remain private in any way."

Ash said he realizes many people may be uncomfortable with doctors relying on a machine to help diagnose their health problems. But he said doctors are ultimately responsible for a patient's care and are the ones making the decisions.

The AI does have its limitations, Ash said. It often gets stumped by complex medical cases, the kind where few references exist online for it to draw from.

"The jury is a little bit still out as to how useful it is in these settings," Ash said. "I sometimes say to some of my patients, 'Even though it's 2026, sometimes we still put up our hands and say, "I'm just not sure."'"

And the AI may not be sure either. Researchers have noted there are many documented examples where a range of generative AI tools — not OpenEvidence — presented falsehoods as facts, a phenomenon called "hallucinations."

A study from Mass General Brigham researchers last year found that some popular AI chatbots operated by OpenAI, the company that owns ChatGPT, and by Meta prioritized being helpful over being accurate when answering medical questions. The researchers reported the tools were not able to identify when a query was illogical, which sometimes resulted in false replies. But the researchers also found the tools could be trained to improve their reasoning.

Despite the concerns, several patient safety experts said they see great promise in AI to reduce medical errors. Mistakes made by humans are already a significant problem in healthcare. A study led by researchers at Brigham and Women's Hospital that was published last year found a harmful diagnostic error occurred in one out of every 14 hospitalized patients.

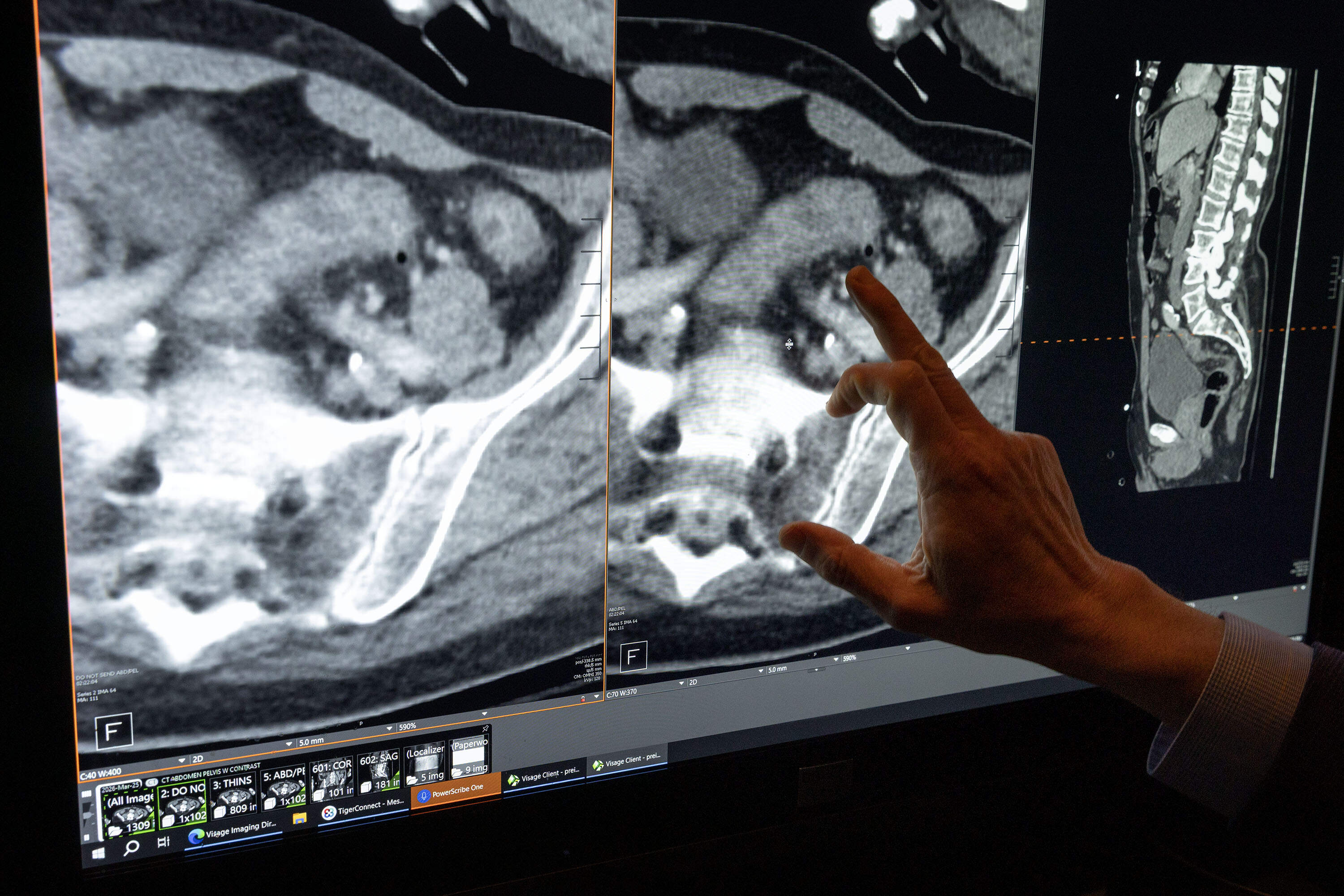

Another area where AI shines, these experts said, is radiology, which involves analyzing images such as X-rays, CT scans and mammograms — and completing those tasks quickly and correctly.

Many times in radiology, "accuracy actually gets better if you use AI than if you don't, in part because it makes the whole process so much more efficient," said Dr. David Bates, executive director of the Center for Patient Safety Research and Practice at Brigham and Women’s.

Many Massachusetts hospitals are using AI in their radiology departments. At South Shore Hospital, doctors use a tool that can enhance CT scan images, while reducing patients' exposure to radiation.

Dr. Ori Preis, chair of the hospital's diagnostic imaging department, held up two scans of a patient's abdomen, one generated using AI, the other without. The image generated with AI was much clearer.

"The AI — through machine learning — has learned how to identify noise versus actual image and clean up the image, so we can obtain the same quality images at lower radiation doses," Preis said.

Preis said the AI often can get a clear image using 30-50% less radiation than a typical scan.

'AI is going to run ahead of us'

Not all healthcare workers are enthusiastic. Some worry the AI wave is flooding their systems with technology they weren't properly trained to use.

Joe-Ann Fergus, executive director of the Massachusetts Nurses Association, said nurses should be more involved in how AI is implemented in healthcare workplaces. She said her members are concerned about several issues, including potential bias in the data used to train AI. Fergus said patients in groups that are underrepresented in research — such as Black, Latino and Indigenous people, LGBTQ patients and women — might be left out or misdiagnosed.

"People are buying these tools off the shelf, and they are trained on a population that is not theirs," Fergus said. "And small changes make a big difference, especially in places like here in our state, where we already know we have disparities in outcomes. Are we building in disparities and bias into the systems?"

Fergus said nurses also worry about whether they might be held responsible for faulty AI information or recommendations, and whether humans could blunt their skills with patients if they rely too heavily on AI.

"We're removing the humans from the process even though we’re saying that that’s not the case," Fergus said. "We're never, in the system, stopping long enough to ask the questions about why and how. It’s always just the shiniest new thing we’re introducing, and I feel like AI is going to run ahead of us."

Healthcare experts acknowledged AI will require new oversight — by hospital administrators and government. They said the technology is evolving quickly and needs guardrails to ensure it improves patient health.

The state's largest hospital systems, Mass General Brigham, Beth Israel Lahey Health and UMass Memorial Health, have set up boards and offices devoted to setting policy and overseeing how staff use AI. There is little comprehensive federal or state oversight.

"It is happening really fast and the governance piece is very up in the air right now," said Dr. Tejal Gandhi, chief safety and transformation officer with the healthcare management company Press Ganey. "There needs to be more guidance in terms of what should be happening at which level and how regulated should it be."

At UMass Memorial Health in Worcester, President and CEO Dr. Eric Dickson said the hospital system is making a $100 million investment in AI and building its own data platform to train its AI tools. Dickson expects that in the next year and a half, the system will go from using about 60 AI tools to 300.

Dickson, who has completed AI training programs at Stanford and Harvard, admits he's an enthusiast. He considers AI "augmented," not "artificial," intelligence. On his conference room table is a small black plastic figure labeled "AI Ninja," which he hands out to doctors who have also completed AI trainings.

Dickson said he plans to offer training in various aspects of AI to 21,000 UMass workers. Without it, he said, those healthcare professionals will be left behind. And he said AI should always operate with humans in the lead.

“AI isn't a choice anymore,” Dickson said. “It's coming. We can't shut it off and so our job as humans is to figure out how to leverage it for the benefit of our patients — in my particular situation — or society as a whole.”

This series is funded in part by a grant from the NIHCM Foundation.

This segment aired on May 4, 2026.