Radio You Can Watch: Turning A Broadcast Story Into Video For Smart Displays

How might WBUR's on-air reporting look on a smart display?

Project CITRUS set out to explore that question as WBUR works toward a strategy for these devices. The exploration had two primary objectives:

- Gain a better understanding of the workflow needed to create short, no-frills news videos.

- Gain a better understanding of how to deliver video on Amazon Alexa devices with screens.

Making A Simple Video

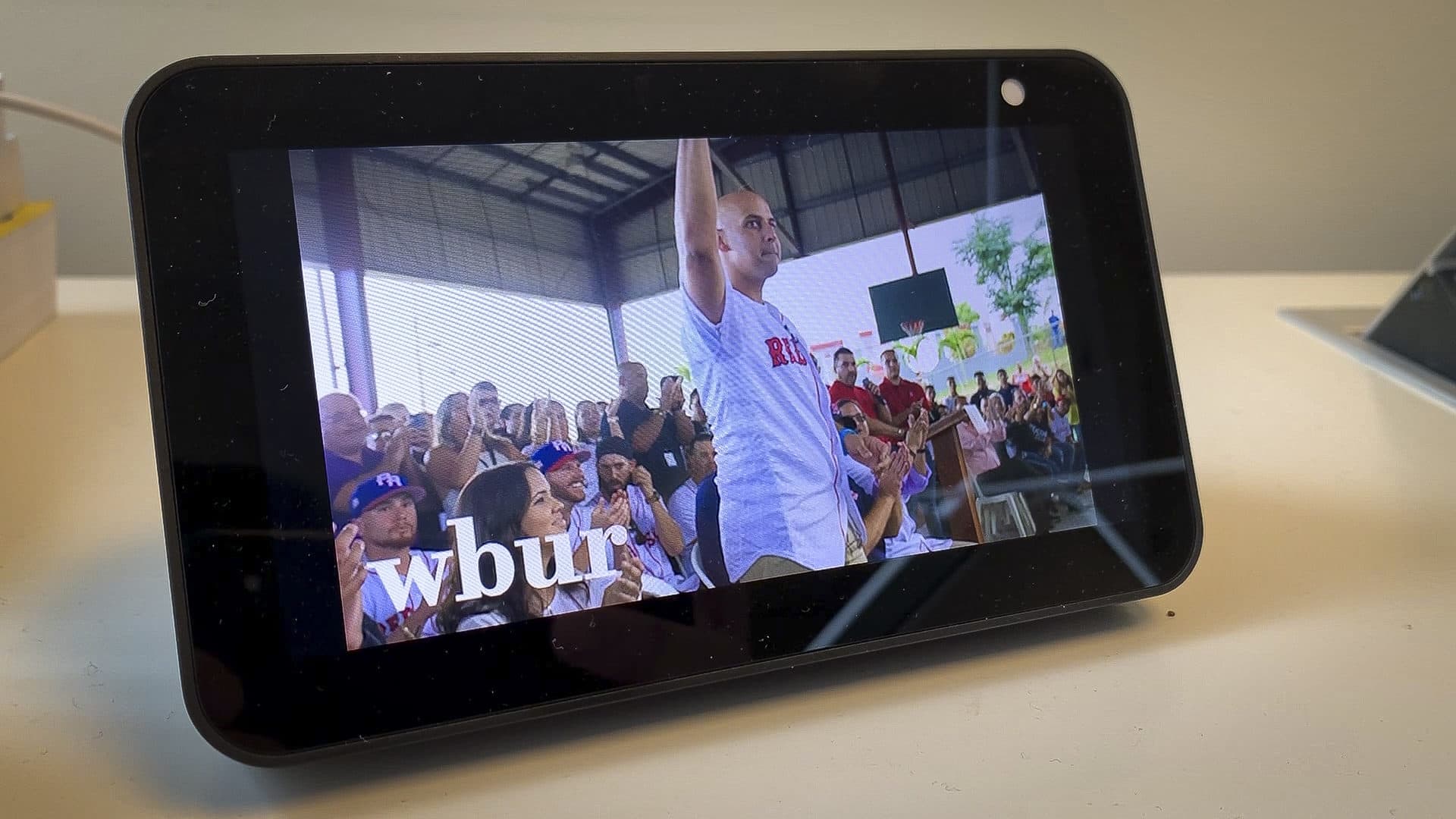

Video creation came first. The rough game plan involved picking a past WBUR broadcast story — in this case one about a Boston Red Sox trip to Puerto Rico after Hurricane Maria — and pairing it with images to make a basic video. I decided to avoid using WBUR's newscast (quick-hitting headline news reports that air on the radio throughout the day) as video source material, even though NPR has blazed a trail with its award-winning Visual Newscast.

As opposed to the national stories featured in NPR's newscast — which are routinely covered by services like Getty Images and Storyful — the more niche, local coverage within WBUR newscasts frequently lacks a strong, easy-to-find visual accompaniment (a 10-second reader about power outages on Cape Cod, for instance).

Luckily, because WBUR photographer Jesse Costa traveled with reporter Simón Rios to Puerto Rico to cover the team's journey, great photos were in abundance. I started by downloading the Puerto Rico story audio and as many relevant images as I could find. But right off the bat, I encountered a problem: The absolute maximum screen time I could safely get out of a single image before it became stale for the viewer was about 6 seconds. However, the full-length radio piece clocked in at 4 minutes and 24 seconds, which meant I would need to source as many as 50 separate images.

That simple math made my decision for me. The radio story would need to be cut — significantly.
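The arithmetic behind that decision can be sketched in a few lines of Python. This is only an illustration of the numbers quoted above; the function name is made up for the example. At the 6-second ceiling, the 4:24 piece needs at least 44 images, and the count climbs toward 50 as soon as some images hold for less than the full 6 seconds.

```python
import math


def images_needed(audio_seconds: float, max_seconds_per_image: float) -> int:
    """Smallest number of images that keeps every image at or under the cap."""
    return math.ceil(audio_seconds / max_seconds_per_image)


# Figures from the text: a 4:24 radio piece and a roughly 6-second ceiling per image.
full_piece_seconds = 4 * 60 + 24  # 264 seconds

print(images_needed(full_piece_seconds, 6))  # 44
```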

After excising as much as I could without sacrificing the story's editorial integrity, I had a 90-second MP3 that was much more workable for video. With the finished audio and images in hand, I fired up Adobe Premiere and began building a timeline. Creating the actual video out of those constituent parts wasn't overly complex: I standardized the amount of screen time each image received, applied a basic animation effect to them using keyframes, and inserted a prominent WBUR logo in the bottom-left corner for good measure (hashtag: branding).
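To make the "standardized screen time" step concrete, here is a minimal sketch that splits a cut evenly across a set of images and lists where each one would start and end on a timeline. It is not how Premiere does it, just the same bookkeeping written out. The file names and the count of 15 images are assumptions for illustration; the text does not say how many images the finished video used.

```python
def even_timeline(total_seconds: float, image_names: list[str]) -> list[tuple[str, float, float]]:
    """Give every image the same screen time and return (name, start, end) rows."""
    if not image_names:
        raise ValueError("need at least one image")
    per_image = total_seconds / len(image_names)
    return [
        (name, round(i * per_image, 3), round((i + 1) * per_image, 3))
        for i, name in enumerate(image_names)
    ]


# Hypothetical file names; 15 images over a 90-second cut works out to 6 seconds each.
images = [f"photo_{n:02d}.jpg" for n in range(1, 16)]

for name, start, end in even_timeline(90, images):
    print(f"{name}: {start:.1f}s to {end:.1f}s")
```

With fewer images on hand, the same function shows quickly how far past the 6-second comfort zone each one would have to stretch.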

Playback From A Device

We needed the finished video file to be small for uploading to Amazon S3. To that end, I wound up using Premiere's "Twitter 720p" export preset, which yielded a 25-megabyte MP4 file. From there, I handed off the baton so that the video could be summoned from our team's Echo Show 5.

Since this was still very proof-of-concept, it didn't make sense to create a standalone Alexa skill just to test out these videos. Instead, we used a pre-existing beta skill and glued on an extra intent with a single, intuitive utterance: "Cora," for the Red Sox manager who led the trip. A request for this intent returns the MP4 served from S3 via the VideoApp interface.
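For readers curious what that extra intent amounts to, below is a rough sketch of a skill handler that answers one intent with a `VideoApp.Launch` directive. It is an illustration under stated assumptions, not the code our team ran: the intent name `PlayCoraVideoIntent`, the S3 URL, the title strings, and the choice of a plain Python handler are all hypothetical. The directive shape (a `videoItem` with an HTTPS `source` and optional `metadata`) follows Amazon's VideoApp interface, which also has to be enabled for the skill.

```python
import json

# Hypothetical values for illustration only.
VIDEO_URL = "https://example-bucket.s3.amazonaws.com/red-sox-puerto-rico.mp4"
VIDEO_INTENT = "PlayCoraVideoIntent"


def build_video_response(video_url: str, title: str, subtitle: str = "") -> dict:
    """Build a skill response that plays a video through VideoApp.Launch."""
    return {
        "version": "1.0",
        "response": {
            # "shouldEndSession" is deliberately left out; Amazon's VideoApp
            # documentation says to omit it when sending VideoApp.Launch.
            "directives": [
                {
                    "type": "VideoApp.Launch",
                    "videoItem": {
                        "source": video_url,
                        "metadata": {"title": title, "subtitle": subtitle},
                    },
                }
            ],
        },
    }


def build_speech_response(text: str) -> dict:
    """Plain spoken fallback for anything that is not the video intent."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": True,
        },
    }


def handle_request(event: dict) -> dict:
    """Route an incoming Alexa request to the video or the fallback."""
    request = event.get("request", {})
    intent_name = request.get("intent", {}).get("name")
    if request.get("type") == "IntentRequest" and intent_name == VIDEO_INTENT:
        return build_video_response(VIDEO_URL, "Red Sox In Puerto Rico", "WBUR")
    return build_speech_response("Sorry, I don't have a video for that.")


sample_event = {"request": {"type": "IntentRequest", "intent": {"name": VIDEO_INTENT}}}
print(json.dumps(handle_request(sample_event), indent=2))
```

Keeping the video response in its own small function means the same builder could later serve other stories by passing in a different URL and title.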

Where To Next?

It's not feasible right now for WBUR to implement a smart-display strategy on par with, say, the likes of NPR and its Visual Newscast. We lack the staffing and resources required, and we're skeptical at this stage about the ultimate payoff for such a major lift. (By contrast, NPR — thanks to its scale — can actively promote a Visual Newscast post-roll as an enticing opportunity for advertisers.)

Our experiment did, however, get us thinking about ways to work smarter instead of harder, such as making the video content WBUR is already producing for digital stories, social media, live events, etc. more easily accessible on smart displays. (And speaking of accessible, any fully realized strategy in this area must include some kind of closed captions.)

Could videos get mixed in with segmented audio content and surfaced within a personalized stream like NPR One, or on a future WBUR-owned platform? Could we design a voice experience that allows a user to ask to "play the latest video from WBUR" on their smart display? Can we work with Amazon and Google to get WBUR video content more prominent placement within smart-display "carousels" that prompt the user to ask to play a video?

Suffice it to say, our scrum board is now a couple tickets richer.