How to redesign schools for the AI age

AI is doing students’ homework, writing their essays — and probably replacing a lot of their future jobs. Is it time to rethink what schools are for?

Guests

Linda Darling-Hammond, founding president and chief knowledge officer at the Learning Policy Institute. Professor emeritus at Stanford University.

Rebecca Winthrop, senior fellow and director of the Center for Universal Education at the Brookings Institution. Co-author of the recent book “The Disengaged Teen: Helping Kids Learn Better, Feel Better, and Live Better.”

Also Featured

Kayla Jefferson, Social studies teacher in New York City public schools.

Ludrick Cooper, Eighth grade English language arts teacher in Florence, South Carolina.

Michael Matsuda, Superintendent of the Anaheim Union High School District in California.

Transcript

Part I

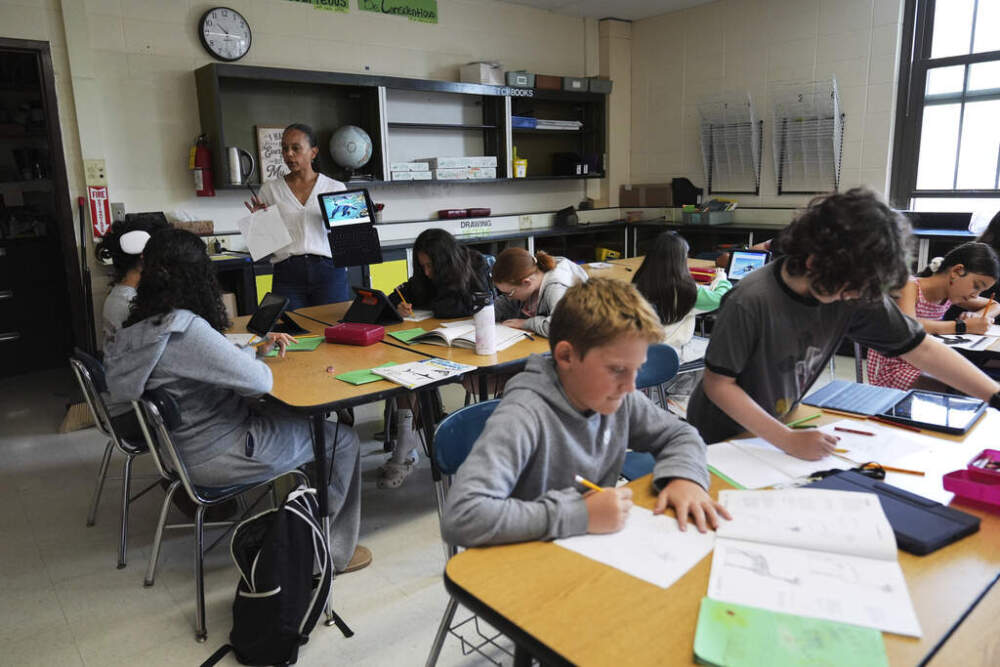

MEGHNA CHAKRABARTI: It's back to school this week for Kayla Jefferson. She's a social studies teacher at Franklin Delano Roosevelt High School in Brooklyn, New York.

KAYLA JEFFERSON: I said it off from the start from September. I know AI, don't try to get around me, like, I understand it. So they know I don't play.

CHAKRABARTI: This year she's teaching seniors, and Kayla has a rule. No using AI to do assignments, not for classwork, not for homework. No AI.

JEFFERSON: It's hard. Because a lot of them really do use AI for their other classes, so parts of their brain are really not like chugging. So it's hard for them to sit in groups and talk to each other and discuss things. And it's so easy to tell that they don't know what they're saying or what they're typing, or even what is on the screen.

CHAKRABARTI: In Florence, South Carolina, Ludrick Cooper has been back in the classroom for more than a month already. He teaches English language arts to eighth graders at Williams Middle School.

LUDRICK COOPER: First week of school, I told them, there's nothing wrong with using AI, you just can't use it in class to try to cheat, plagiarize. But it's okay to use it at home if you want to learn. Because when I was growing up, the way I learned was my parents had an encyclopedia set, and that's what I kind of share with the kids. I'm like, AI is like the new encyclopedias.

CHAKRABARTI: Ludrick and Kayla are far from the only teachers wrestling with how to handle AI in the classroom.

The technology can now do students' homework assignments and write their essays. It can summarize complicated books and do math problem sets, which led us here at On Point to wonder. Most students today obviously do not learn the skills that they would have needed to survive, say, back in the 19th century. They don't learn those in school anymore. There's no point.

So what should they be learning today to survive and succeed in the 21st century, in the age of AI? Now, this is about much more than how AI tools should be used in the classroom. It's about the underlying question of what the purpose and goal of education should be in the age of AI. What is school for anymore?

Joining us now is Linda Darling-Hammond. She's the founding President and Chief Knowledge Officer at the Learning Policy Institute, and also a Professor Emerita at Stanford University. Linda, welcome to On Point.

LINDA DARLING-HAMMOND: Thank you. Good to be here.

CHAKRABARTI: Okay, so you have been working in education for a long time.

Given that experience, how much of a disruptive force do you see AI being in, let's just focus on K-12 today, K-12 education? And if so, why?

DARLING-HAMMOND: It is a disruptive force, not only in the classroom. And you played some interesting quotes from teachers who are experiencing it, but also it's a disruptive force in the education policy sphere, which is causing people to ask the question that you started with.

What is school for now? It both provides a lot of the information that people can use in creative ways for supporting teaching. It does not need to be a replacement for teaching as some fear and have experienced. But also, we have a factory model system that was created a hundred years ago with a curriculum design that was set in place in 1892 by something called the Committee of 10.

And it has needed to change for a while. Many schools have been redesigning, but we have not had an impetus for substantial change until AI hit, and now you see states trying to change.

CHAKRABARTI: Okay. In that case then AI could be the positive disruptive force we've needed for, I don't know, more than a half century.

But let's talk more about this factory model of education here, because I'd love to actually put some details on it. What happened in 1892? Why was that committee arranged? What did they decide?

DARLING-HAMMOND: Back in 1892, the National Education Association established a Committee of 10 who set the curriculum, and you'll recognize it in a lot of the requirements for graduation today.

Algebra, geometry and Algebra II. Biology, chemistry and physics, in that order, alphabetical. That was adopted by schools in these siloed areas of the curriculum. This was before there was such a thing as computers or big data or even interdisciplinary problem solving. So that was one piece.

And then the factory model school was created to handle the compulsory attendance laws that were coming into place. And we moved from one room, rural schoolhouses to big warehouses, often in cities where kids were put on an assembly line with a different teacher every year. And then in middle and high school, a different teacher every 45 minutes, who were supposed to stamp them with a lesson that was standardized and prescribed.

Some kids would fall off the assembly line, some would make it through, and that would be the selecting and sorting function of the schools. That's what we've been working with for all of these years. Trying to make it more thoughtful, more humane, more able to deal with the kind of deeper learning kids need now.

CHAKRABARTI: You're making it sound pretty terrible. But since 1892. Okay. By the way, just as an aside, the biology, chemistry, physics, typical progression that a high school student goes through with science, you said alphabetical order. Is that literally how it was decided in 1892?

DARLING-HAMMOND: Yeah, they wrote it down and it was written in alphabetical order and people adopted it that way.

So it's been a whole process trying to allow schools to move towards interdisciplinary sciences, towards other orders of science, how to integrate it. And we really haven't budged very much.

CHAKRABARTI: Okay. The stickiness of that is amazing to me. Now, I just do want to underscore a point you made, that it's not that nothing has changed since 1892.

You said that educators have been trying to make this fundamental process more relevant and more humane, but the structure of it hasn't changed in a hundred and what, 30, 40 years. Why do you think that is? Is the counter argument that maybe the structure's actually pretty good? So that's what contributes to its longevity.

DARLING-HAMMOND: We do have a lot of schools that have redesigned. There are thousands of schools across the country that have redesigned in ways that allow for more time, project-based learning, block scheduling, teaching teams that share students. And these schools get better outcomes than the factory model.

We have a lot of studies that show that it's sticking, not because it's better, but because it's rooted in a bunch of regulations and laws that states have adopted over all of this last 100 years. And that's part of what needs to be shaken up for us to be able to move forward.

The idea of the sort of factory model, too, was a transmission curriculum where you would just learn things, write them down, spit them back on tests. And yet in this age of AI, there's an expectation that by 2030, 30% of our jobs will be discontinued. They'll be eliminated or radically changed, and the skills that people will need in that AI economy are very different from the skills that were the assumption of the schools that we have inherited.

CHAKRABARTI: You're referencing a famous McKinsey study from a couple of years ago that gave that astonishing 30% number regarding --

DARLING-HAMMOND: Actually, there've been like three or four studies, and they all converge on that same assumption.

CHAKRABARTI: Yeah. Okay. So this, actually, 2030 is five years from now.

It's basically today. So at the highest level then, and then we'll dig deeper throughout the rest of the show, but at the highest level. With AI rapidly transforming the world right now. Linda Darling-Hammond, what do you think the fundamental purpose of education should be in this country?

DARLING-HAMMOND: I think it has to be developing something that the HR department at Google once called learning ability. The ability to find and use information and, of course, integrate it with the knowledge that you have. We're not getting rid of knowledge in this era. But being able to use the knowledge to solve problems, to put things together, synthesize, analyze, collaborate with others to create a problem solution or a product, then test it yourself and be able to improve it yourself, and to actually be able to manage knowledge for the purpose of solving problems.

That really, I think, is the goal: to develop learning ability and the ability to make good judgments. The human side of learning is going to be very important.

There is, of course, data literacy and there is the understanding of STEM and technologies, but there's also the unique human skills, creativity, critical thinking, emotional intelligence, the ability to work and collaborate with others. All of those are gonna be incredibly important.

CHAKRABARTI: Couldn't you argue, though, that the ability to think critically, to be able to find new ways to problem solve given a base of knowledge, emotional intelligence, et cetera, that these have, maybe not always, but at least over the past several decades, a generation or two, already been the goals of education?

DARLING-HAMMOND: Yes, many have said that. Many schools, districts, states have articulated 21st century skills. And yet we are still trying to get our system to be organized to produce those skills on a regular, equitable basis for all students.

CHAKRABARTI: But tell me more about what you mean by that.

DARLING-HAMMOND: As I said, the curriculum, our testing system is still multiple choice tests. Pick one answer out of five, which is not critical thinking. It's an artificial form of demonstrating learning. Our curriculum is still organized around transmission of information rather than the use of information to solve real world problems to a great extent.

Again, except for the schools that have really stretched out there and broken the rules, kids are learning in classrooms at desks rather than out in the world, in externships and internships and using a variety of tools. So all of those things that will allow us to really produce those kinds of skills need to be part of the norm rather than the exception. And they need to be equitably distributed, because we have big inequalities in our educational system in terms of who gets access to a thinking curriculum.

Part II

CHAKRABARTI: A little earlier we heard from Ludrick Cooper, he teaches language arts to eighth graders in South Carolina and he says critical thinking is the most important skill teachers can emphasize in the AI era.

COOPER: We're in the age now where everything is fast. Fast. I don't think they spend enough time to like critically think about certain things. It's easy to go to AI and ask the question. And boom, you got the answer. But how did you come up with that answer?

What were the steps taken to get to that answer?

CHAKRABARTI: That's Ludrick Cooper, a teacher in South Carolina. I'd like to bring Rebecca Winthrop into the conversation. She's senior fellow and director at the Center for Universal Education at the Brookings Institution and co-author of the recent book, The Disengaged Teen: Helping Kids Learn Better, Feel Better, and Live Better.

Rebecca Winthrop, welcome to On Point.

REBECCA WINTHROP: Thanks for having me.

CHAKRABARTI: You heard Linda Darling-Hammond's answer to this big question of what is school even for in the age of AI.

I'd love to hear your answer to it.

WINTHROP: I agree with a lot of what Linda has said, but the thing that I keep thinking about is the role of school in society.

One of the things that Linda didn't talk about was, in the mid-1800s, why did we even want to have a school system, a common school system across the country? And I think about one of the main activists. So this is before the Committee of 10 came up with how to execute it.

Horace Mann was a big activist, and one of the main reasons he gave was that we are a new country, a new democracy, and without schools and a shared understanding of history and a base of common knowledge, we won't be able to have a shared citizenry that will hold our democracy together.

And I think more and more that we've lost that purpose of education, but it is going to be increasingly important in our current, very polarized world. And in a world where the purpose that had overtaken education, which was really achievement, knowledge acquisition, and frankly for a purpose of ranking and sorting to get to higher education.

With generative AI, a lot of that sort of achievement culture, and as Linda said, acquiring knowledge and spitting it back out, is moot and irrelevant. Because AI does it. So how can we get back to a purpose of education where kids are learning, they are thinking critically, but towards an ethical end? An end around what does it mean to be a constructive citizen of your neighborhood, your family, your community, and your country.

CHAKRABARTI: We'll get to all those points here in just a second, but both of you have mentioned knowledge. And I take a slightly different view than both of you. I actually think knowledge should become even more important, the knowledge acquisition in schools. Because I understand why you're linking it to acquiring knowledge and then spitting it back out.

Maybe the spitting it back out part and the way we measure how kids spit that knowledge back out is very lacking. But I would say that fundamental knowledge acquisition, learning how the world works, instead of the old-fashioned skills acquisition, which I think has been a focus in the past, what, 20, 30 years, will become even more important with AI.

Because how are you supposed to judge what the AI is spitting back out to you if you don't have an adequate knowledge base in any subject that you have an interest in? Linda, let me turn that one to you first.

DARLING-HAMMOND: Yeah. And I totally agree with you. We still need a structure and a framework for acquiring knowledge. The question is, how do you acquire it and how do you use it? And I think you pegged that with the question, is it just spitting it back out in factoids?

Or is it using knowledge in ways that you're applying it, deeply understanding it and able to then bring a critical eye to it? So schools that are really organized around project-based learning, for example, where the way in which you use the knowledge is to put it together with other kinds of knowledge to try to answer a question, try to solve a problem, bring a different kind of learning.

And the schools I mentioned that have been really redesigning assess that knowledge in ways that are really applied, as do other countries, by the way. Where you will find in Australia, New Zealand, the UK, Singapore, kids are actually conducting a scientific investigation to demonstrate their science learning, rather than memorizing a set of disconnected facts that they will forget and not be able to apply later.

So how you learn it and how you apply it is very much a part of this. And as you noted, critical thinking is what you have to bring to the AI. That has to become the starting and ending point for much of the learning.

CHAKRABARTI: Okay. So, Rebecca, that links to something Linda had also said earlier, because I think this is why people actually miss the point when they talk about how AI tools should be used in the classroom.

Because in the examples that Linda gave us, a lot of the reason why we're doing that sort of, okay, you memorize in chemistry how bonds form between atoms, and then you take a test where you show that you've memorized that, is that it's a structure that was put in place because of educational policy, right?

Like showing what kids can achieve on paper, on tests, is not necessarily something that individual schools are choosing to do. Their states are choosing to do that. So do you think AI and the reality of the transformational change that it will bring could force that educational policy change?

WINTHROP: I am really glad you brought up first, Meghna, the point on content. I would not want to be misinterpreted as saying content doesn't matter, but I'm talking about the purpose for which we are mastering content. And currently in the current system, the traditional system, kids master content to quickly demonstrate that they know it, pass tests.

And really, it's a gatekeeping achievement unlocking piece. And it's for higher education, sorting and ranking. And it's not that content isn't pertinent, important. I had put on my, I have a little LinkedIn newsletter and had said, I'm now obsessed with content. Whereas 10 years ago I was obsessed with skills.

Because I thought schools were more obsessed with content than skills. I do think that, as you both have been talking about earlier in the episode, there is a small window of opportunity, or I should say a narrow path, in which AI could unlock really important transformation in policy.

As you say, it's often not the classroom teacher that is holding things back. They're really squished from the top, from policy and standards, and actually, frankly, Meghna, squished from the bottom, from demands from parents and families who are well-meaning, but anxious. And so if we could all get together and say, what we want is an education system where you do acquire content, but not for just a short-term demonstration on a quiz or a test periodically, but to, as Linda said, solve problems, work together. And we will assess how you do that, and make sure you strive for excellence, but in a real-world context.

That is a very different purpose, where you pair knowledge acquisition with knowledge application. And AI could be the forcing mechanism that unlocks that.

Because it does in a way just ultimately really undercut and hollow out that sort of ranking and sorting purpose around what my co-author Jenny Anderson and I call the age of achievement. And I think there is a possibility for that path to happen if we all work together and try very hard. I do not think it's guaranteed though.

I think there's multiple other paths that could be disastrous, where AI really hollows out kids' thinking and emotional wellbeing and social skills.

CHAKRABARTI: Okay, so let me ask both of you something else. Because much earlier in the show, we played a clip of a teacher saying, sometimes students, they're just like asking out loud, why am I even here?

Because, like, the large language models can do so much that they're ostensibly supposed to learn in the classroom. So kids are already very highly aware of that, and that question of, why am I even here, coming from, I don't know, like a fifth grader, really sticks with me. And so Linda, just to take it back into the classroom for a moment, we're bouncing around between levels here.

What can or should teachers be doing to answer that question through what happens in the classroom?

DARLING-HAMMOND: Yeah. It's both what happens in the classroom and then what happens beyond the classroom. We have in California, we have hundreds of schools, academies that go under the banner of linked learning.

And in those, students are studying, for example, at Life Academy, they're studying health professions, medical science, bioscience. They are doing internships at the local hospitals. They're designing strategies or tools for people with disabilities that will allow them to be able to function more in the world.

They're applying their math knowledge, their physics knowledge, their biology knowledge to real world problems, and they don't ask the question, Why am I here? They know why they're there, and in settings where kids have the opportunities to use what they're learning in civic engagement, I want to really reinforce Rebecca's point about how important schools are for developing citizenship.

Whether they're solving problems in their community, whether they're applying their knowledge, they're not just sitting and spitting it back. They don't ask that question. And so that's part of it: how do we design schools much more flexibly for those activities? The other thing is, how do we make AI a focus of critical thinking?

For example, one of your teachers early on in the show noted that AI is often wrong, and those of us who use it know that you have to check it. But imagine an assignment where kids ask AI the answer to some question, and then their job is to check the sources, the references, figure out where it got it right or wrong, or whether it's questionable, and then argue that through.

And then the assignment might be to write about the shortcomings of AI, or where AI needs to get better. But using these tools in ways that put the student in a critical learning pose, rather than substituting for learning, substituting for what they would learn by asking AI to do it for them.

CHAKRABARTI: Okay. I promise both of you that I do want to dive more deeply into the sort of civic purpose of school in the last part of the show. But let's just continue to focus on the quote-unquote academic side. Rebecca, I'm hearing both of you say over and over again the importance of getting kids into a learning mode, a critical thinking mode, an engagement mode that also reveals the relevance of what kids are learning to the larger world.

I think one of you, I can't remember who, called it also explorer mode, but we already have evidence that, what, just a tiny fraction of middle and high school students right now are regularly in explorer mode in classrooms. Rebecca?

WINTHROP: Yes. So my co-author Jenny Anderson and I, a couple years ago went on a deep dive around why kids don't like school.

And one of the main things we found is that they're super disengaged. And we see that in chronic absenteeism levels, mental health, we see it in poor academic performance. And we learned that it's actually much more nuanced than a kid is engaged or a kid is disengaged. The other thing we learned is that grades are misleading.

They only tell half of the story. You can have kids with straight A's who are pretty disengaged, and we found that kids show up in four modes. They're in passenger mode: coasting, doing the bare minimum. Achiever mode: trying to get gold stars on everything, but often actually really fragile learners, becoming risk averse. They don't want to try anything new for fear of getting a bad grade, and they become really great followers, which is not good for an age of AI.

Resister mode: these are the quote-unquote problem children, avoiding and disrupting. And then explorer mode, which is where we would want kids to spend most time. This is when kids are really motivated, really engaged, they're following their curiosity, even in a very structured environment.

You can see kids asking teachers, can I write my paper on this? We heard so much from kids in achiever mode. They said, oh, I got this essay question back. I disagree. I want to write my essay on this, but I'm not going to do that. Because I think my teacher won't grade it well. Kids in explorer mode would go and say, hey, I disagree with your framing. I want to write it on this. Teacher, can I try to do it? Or ask if they can study with a buddy.

There's many small ways to get into explorer mode, and schools are just really bad at supporting that type of agency and autonomy over kids' learning. We found with our partners at Transcend that less than 4% of middle school and high school kids spend regular time in explorer mode, learning in explorer mode in school.

And this is the mode that you need kids to be in to develop that learning agility, learning ability, and be navigating the world of AI.

CHAKRABARTI: If it's already 4%.

WINTHROP: Exactly.

CHAKRABARTI: And schools are really bad at that. The problem precedes AI. It sounds like you're also describing that kids just don't have adequate incentive, especially if they're not internally incentivized and a lot of kids are very naturally externally incentivized and that's okay, but if they don't have those incentives already, there's an even bigger problem.

DARLING-HAMMOND: I just want to say the school has to create the setting in which they can be in explorer mode. Go ahead Rebecca. (LAUGHS)

WINTHROP: Yeah, no, just what Linda said, that we found that kids are actually all born in explorer mode and we slowly beat it out of them. 75% of kids in third grade, we found in our research, loved school, and by the time they were in 10th grade, 25%. It had flipped.

So it's really the environment. And we also found that kids can really quickly, like in a week, a month, get into explorer mode in learning conditions that marry just what Linda was talking about, knowledge acquisition with knowledge application. Okay, let me learn biology, but I'm going to go and spend a day with a biologist and try to help figure out the problem with trees in my park.

I'm making that up. The kids absolutely can get into explorer mode. It's really, can we use AI as the final push to re-engineer the design of our teaching and learning environments that support explorer mode? Which is, as Linda said, something a lot of educationalists have been trying to do for a long time and is happening on the margins.

It just isn't at the core of the system.

Part III

CHAKRABARTI: Linda and Rebecca, just wonder if you could listen along with me to a few more educator voices.

Because we reached out to quite a few educators and heard from a lot of them as well on this issue of what is school even for? This is Alyssa in Livonia, Michigan. She's a seventh and eighth grade English language arts teacher. She says she tries to teach students not just how to write, but why we write.

ALYSSA: I often tell them that using AI to generate essays for you is really cheating yourself out of the experience of writing. There's so much you can learn about yourself, as well as the topic you are writing about, through the process of writing. What I do try to teach my students is responsible use of AI, like using it to take a text that maybe is a bit too hard and leveling it to a reading level that is more accessible.

CHAKRABARTI: So that's Alyssa who listens to On Point in Livonia, Michigan. At the Anaheim Union High School District in California, students can work with an AI powered tutor. The kids named it Skrappy the dog.

MICHAEL MATSUDA: Skrappy is your tutor that does not give you the answers. You ask it a question, and it asks you a question back: did you try it this way, or did you try it that way? As a real tutor should be doing, as an educator does.

CHAKRABARTI: Michael Matsuda is the superintendent of the Anaheim Union High School District. He says Skrappy the AI tutor is part of the district's partnership with an educational technology company called eKadence, and it's customized for each student.

MATSUDA: Skrappy knows everything about you, your grades, your clubs, sports, your aspirations.

Like it might give you an example from your football team, your football game, right? And that's going to engage the kid more. We know that excellent teachers, that's what they do all the time. Because they know the kids.

CHAKRABARTI: Matsuda says all the student data is firewalled, meaning eKadence and the district can access it, but it's protected from breaches by outside actors.

Right now, Matsuda says some 75% of the Anaheim district's teachers are using Skrappy. The district has 19 schools and about 25,000 students. Matsuda says it's too early for data on how the AI tutor is affecting student performance, but he's hopeful. The district is also launching an AI mentor through eKadence this school year, which Matsuda says will give students advice about different careers.

MATSUDA: He might want to become a doctor or nurse or another profession, whatever that is, a carpenter. And then you are getting an AI mentor, like a nurse. That is almost like your aunt, your uncle who's a nurse, because your aunt, your uncle who's a nurse, knows you, knows your sports, your clubs, your grades, and can give you more personalized mentorship.

CHAKRABARTI: Matsuda knows AI is, of course, not always reliable. So he says the Anaheim district teaches seventh through 12th graders to have a healthy skepticism about where the information is coming from.

MATSUDA: What we're trying to do with the ethics of AI is for them to understand that the social media, all the stuff can be used against you and manipulate you.

I think kids are very capable of making, and they have to be. We have to teach them that. Otherwise, we live in a world where it's very dangerous in the world of AI. And kids need to have the skills and the ethical grounding to understand how AI can really enhance the human condition. Or it can undermine the human condition.

CHAKRABARTI: So that's Michael Matsuda, superintendent of the Anaheim Union High School District in California. Linda Darling-Hammond, I do want to touch briefly upon what I think is another specter that looms over this conversation. And that is that AI can be seen as a supercharged way of not achieving much more than simply allowing us to make the same mistakes in education, but even faster, or more efficiently.

And I think part of that problem is accelerated by the fact that ed tech is already a multi-billion-dollar industry in this country, and AI is like the newest shiny thing. I can imagine there's a lot of FOMO with schools, that they're just looking out there and saying, what tool can I buy? What tool do I need to bring into the classroom? And that might entirely lead us down that wrong path that Rebecca was talking about.

DARLING-HAMMOND: Yeah, no, that is very true. And there aren't a lot of safeguards around, or consumer guidelines or any of those things around how to select and develop AI.

In fact, the tool that Mike Matsuda is using in Anaheim is developed with a non-profit organization that is not in it for the profit motive and is really trying to be responsive to schools, but that will not be the case everywhere. So I think that is a danger. There's also the danger that again, resources are very inequitably distributed across schools and districts in the United States.

And so the places where teachers are learning about how to use AI responsibly are more likely to be in affluent communities. And we have some data that show that in lower income communities, there's much less access to learning about this field, these tools, how to use them, and that means that the challenges and the potential problems are likely to be inequitably distributed as well.

CHAKRABARTI: Rebecca, do you want to pick up on that?

WINTHROP: Yeah. I think one of the most important things we have to remember about AI is it is unlike any other ed tech rollout we have seen. Number one, it is driven by commercial general-purpose companies, very rarely does some massive consumer facing tech have its sights on the education sector.

And education is absolutely a massive use case of generative AI. This past spring, there were massive, it's like a mattress sale with generative AI labs, trying to one up each other to get students to buy onto their products. You'll get a discount on the premium product for students if you sign up with ChatGPT, no, Grok, no, Gemini.

And it's just continued since there, from there. And a lot of time. So that's one. Two, the curated ed tech experience. I really loved the clip you played from Anaheim. That's a very thoughtful teacher, really thinking deeply about the ethics and good, helpful use cases that advance learning, not undercut it.

But most kids are accessing gen AI outside of school.

So we can't just focus on beautifully curated ed tech interventions, which is a piece of the positive path for sure. We have to have a society-wide, parents, educators, ed leaders, faith-based leaders, community service leaders. Anybody who cares about kids needs to really work together this time around, versus when social media was rolled out and we all sat on the sidelines and took a wait and see approach. And really say, Hey, general purpose commercial AI companies, you have got to put in much stricter guardrails, right?

Because they say, oh, like ChatGPT, you're supposed to have parental consent between age 13 and 18 to open an account. But the truth is a gajillion students just lie and make up their birthday and are using it. We all need to come together and find a way to make this technology be used in a way that is ethical, powerful, helpful, amplifying of the human spirit and condition.

CHAKRABARTI: Here's one example that comes from an On Point listener. This is Christian in Florida, and he says AI has greatly changed how he interacts with his 11-year-old daughter when it comes to her homework.

CHRISTIAN: We will routinely use Google Gemini to not just give us the answer, but it will give us the breakdown.

And an explanation, and you can even ask it to give an explanation for say, a fifth grader or for a sixth grader to understand and to act like that's not a valuable tool is to be naive, because when we were growing up, we were told we wouldn't have calculators in our pocket. And here we are with very advanced calculators in all our pockets.

So AI is the next tool for these kids, and we might as well embrace it and learn how to use it properly.

CHAKRABARTI: So that's Christian who listens to On Point in Florida. Rebecca, there's something else that I wanted to ask you that Linda has brought up a couple of times, and that is about the vast disparities district to district, in this country. There's always been this double-edged sword of education in America that it's the one ubiquitous, but hyper-local institution in America. But superimposed upon that is, are the sort of state regulations and of course, federal on top of that.

So schools at individual levels can try to change according to their ability. I'd say state and federal regulation lumber along much more slowly. But is there amplified risk of further divides being developed between well-resourced and not as well-resourced schools with AI? Or could possibly, and this might sound pie in the sky, could some of those less well-resourced schools maybe come out okay? Because they're not necessarily running towards that first flush of AI in schools.

WINTHROP: That is exactly what I have been seeing in the data. We are in the midst of a large Brookings global task force research project looking at the risks and the benefits of AI. And trying to figure out what we should all collectively do in the U.S. and elsewhere. And in the U.S. and in plenty of other countries around the world, there is a growing AI gap between well-resourced communities and low resourced communities.

In the U.S., you see this a lot with a rural versus urban cleavage, but you also could see it in places where you have, again, families, since kids so often access this tool, like the clip of the parent you talked about before, outside of school.

You get socioeconomic cleavages too where parents know about Gemini, are literate and maybe they're paying for Gemini Pro, right? And getting really good, top AI assistance and they have the time to be able to sit with their kid and model and scaffold and show how to use it well.

So the kid is actually learning. Versus families who don't have access to this sort of higher premium products, maybe are working a couple jobs, the kid is using it. You can revise your essay by social media, by the way. Kids get to this, ChatGPT is in a lot of places with wrappers, tech wrappers around it.

So they may have less support and handholding. So you are seeing a big divide, but I also agree with you. That's one of the things we're thinking about in our research. Is could it be there's a leapfrog opportunity here? Where the low resource communities are going to be able to move to a better spot with AI and education, because they won't fall into the same problems that the first gen AI and education communities fall into.

Think of it as akin to Sub-Saharan Africa leapfrogging to cell phones and mobile phones without having to lay landlines.

CHAKRABARTI: Yeah. I want to give voice to one more listener because she brings up something that both of you have talked about, and I promised to return to this, about sort of the fundamental purpose of schools and education in this country.

And part of that purpose, not just being academics, creating a new generation of citizens. So this is Maddie. She listens to On Point in Wilmore, Kentucky. She teaches music and music appreciation, and she's also a mom.

MADDIE: I want my children to be able to think critically and to think humanly, emotional intelligence, emotional maturity.

Those are things that AI can't give to us, and the obsession with being right or being accurate is not as important as the need to be humane and kind and gracious with one another.

CHAKRABARTI: Linda Darling-Hammond. I want to give you a minute to answer this here. Because I hear that and many parents, this is exactly what they want.

I would argue some of that has to come from home as well, not just in schools, but in talking about incentives to really shift focus to things like critical thinking and emotional intelligence. I still don't hear that our current system of education has those incentives built in.

DARLING-HAMMOND: Many schools are trying to build those in.

And you'll see across the country many schools that are trying to develop social and emotional learning. They're trying to create safer and more secure and more trusting environments. I think this idea of the child becoming a citizen of their family, their school community. And then the broader community is key to this.

We need to create venues in schools where students learn to be responsible and caring to all of their peers as well as in relation to their teachers. And there is a lot of work going on. But there are those who worry, if you take time for that, it will take away from the academics.

We don't have time for that sort of social and emotional learning. The data are very different. They show that when you help students become part of a community. First of all, when we are in a safe and trusting environment, we learn more effectively. That's how our brains work, how our neuron system works and the science of learning helps us understand why that's so important.

In a stressful environment, we learn less well because the cortisol gets in the way of all of the thinking that we have to do. In addition, when you teach kids how to understand their own feelings, collaborate well with others, manage their emotions and work through problems, they become more effective human beings and academic achievement goes up.

So those aspects of learning are a pathway to academic success and to life success, not a distraction. And we need to continue to help every school district understand that.

The first draft of this transcript was created by Descript, an AI transcription tool. An On Point producer then thoroughly reviewed, corrected, and reformatted the transcript before publication. The use of this AI tool creates the capacity to provide these transcripts.

This program aired on September 3, 2025.