
Gaming The Grade: How One Middle Schooler Beat A Virtual Learning Algorithm

When Lazare’s first history assignment of the virtual school year came back scored 50/100, a resounding F, the seventh-grader was crestfallen.

But when he told his mom, Dana Simmons, that the grade had appeared just moments after he’d submitted his answers, a series of short written responses, she knew immediately what had happened, and she was outraged.

An algorithm had graded her son’s responses.

“Algorithms can't judge what learning history means,” says Simmons, herself a professor of history at the University of California, Riverside. “Learning history is not about submitting the correct word!”

Distraught but undeterred, Lazare tried again, this time intent on figuring out precisely what the algorithm wanted from him. Armed with tips from his mom, who suggested the program might give a higher grade to a longer response, he raised his score to an 80.

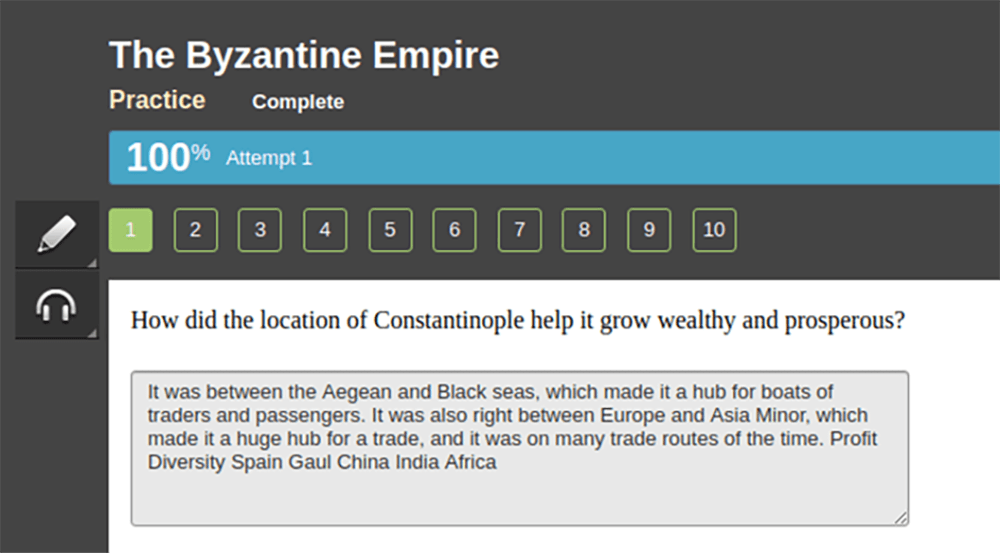

Less than 24 hours later, he cracked the code, earning a perfect 100/100.

The answer that appeased the algorithm? “It was about four lines, one paragraph, and one of the sentences is just a bunch of words at the end,” Lazare says.

Lazare and his mom fooled the software’s robo-grader by writing a couple of coherent sentences on the topic at hand — Constantinople’s prime geographical location in the Roman Empire — followed by a formless jumble of words that could be relevant: “profit, diversity, Spain, Gaul, China, India, Africa.”

Lazare adds that he’s still not 100% sure how to trick the program into giving him a perfect grade every time. “We'd have to do a bit more empirical testing,” he says with a grin. But he has a pretty good idea now.

“Essentially, what I think [the algorithm] does is it looks for certain words,” Lazare says authoritatively. “And, uh, compares [your answer] to a pre-written essay to see if it's correct.”

Emily M. Bender, a professor of computational linguistics at the University of Washington, says the scoring algorithm could be even simpler than what Lazare describes. It’s possible, even likely, she says, that the algorithm is primarily looking for the presence of specific keywords.

“Then you could also add in, you know, a ‘good’ answer is going to have at least two sentences and at least 20 words,” Bender offers by way of example. “These are very simple things to calculate.”
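To make Bender's point concrete, here is a minimal sketch of the kind of scorer she describes: count keyword matches, then check for at least two sentences and at least 20 words. It is an illustration only, not Edgenuity's actual method, which the company has not published. The keyword list, the `score_response` function, and the half-credit penalty for short answers are all assumptions invented for this example.

```python
import re

# Hypothetical keyword list, loosely based on the words Lazare says he used.
# The real system's keywords (if it uses any) are not public.
KEYWORDS = {"trade", "profit", "diversity", "spain", "gaul", "china", "india", "africa"}


def score_response(text, keywords=KEYWORDS, min_sentences=2, min_words=20):
    """Return a 0-100 score from keyword matches and simple length checks."""
    # Lowercase the answer and pull out its words.
    words = re.findall(r"[a-z']+", text.lower())
    # Treat runs of ., ! or ? as sentence boundaries and drop empty pieces.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]

    # Fraction of the expected keywords that appear anywhere in the answer.
    hits = keywords & set(words)
    score = 100 * len(hits) / len(keywords)

    # Assumed penalty: halve the score if the answer is too short.
    if len(sentences) < min_sentences or len(words) < min_words:
        score *= 0.5
    return round(score)


thoughtful = (
    "Constantinople sat between Europe and Asia, so merchants passed through "
    "it constantly. Its harbor and walls made the city wealthy and easy to "
    "defend against invaders."
)
gamed = (
    "Constantinople was in a good location for trade. It connected many parts "
    "of the empire. profit, diversity, Spain, Gaul, China, India, Africa."
)

print(score_response(thoughtful))
print(score_response(gamed))
```

Under these made-up settings, the answer padded with a list of likely keywords earns full marks, while a coherent answer that happens to miss the list earns nothing. Nothing in the function checks whether the words form an argument, which is the weakness Lazare's jumble of words exploits.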

Straightforward doesn’t necessarily equate to useful, she points out.

“In the best case scenario, the point [of automatic scoring] is to give students feedback so that they can figure out what they need to learn more about,” she says. “But if students are using it for that purpose, they really need to be supported so they can understand the feedback.”

Edgenuity, the K-12 education service provider that Lazare used for his history assignment, told Here & Now in an emailed statement that it "does not use algorithms to supplant teacher scoring, only to provide scoring guidance to teachers." The company also says that major contributors to students' grades must be teacher-scored, while "open-answered" review questions, such as the ones Lazare answered, "aren't drivers of a student's course grade — but they may count for completion scoring as part of a larger activity."

As the coronavirus pandemic forces millions of students into virtual learning, schools across the country have widely adopted new virtual learning platforms such as Edgenuity. The company says it currently provides its products to more than 20,000 schools.

The Los Angeles Unified School District, where Lazare goes to middle school, has taught courses through Edgenuity for years, prompting questions from school board members about its efficacy. And robo-graders across the academic landscape have drawn criticism from experts who say they can be biased against certain demographic groups, and from teachers who point out the absurdity of grading writing by machine.

One Massachusetts Institute of Technology-affiliated researcher went so far as to write a computer program to spit out nonsensical essays that earn top scores on the GRE graduate school entry exam — a slightly more sophisticated version of Lazare’s bundle of words.

Simmons doesn’t blame her son’s teacher, or even the district, which she says has made a Herculean effort to accommodate its 600,000 students all learning from home. And as she prepares to teach a 200-person history lecture from her dining room, she understands that teachers need help.

“There is a place for educational technology,” she says. “But [Edgenuity] has packaged all the bad parts of teaching and stuck them together and sold them to districts as this, like, substitute for a teacher.”

Edgenuity does allow teachers to read answers and override automatic scores, which means Lazare’s hack isn’t foolproof. It’s also not clear to what extent Lazare’s assignment scores will factor into his final grade, Simmons says.

But she’s not worried about the grades. She’s concerned about the implications of her son’s experience. Lazare was able to draw on his parents’ expertise in history and educational technology. What about other kids without that support?

“From an F to a perfect score in 24 hours, and the disturbing thing is that there is no difference in his reading ability,” she says. “There is no difference in his ability to understand history. He’s now getting hundreds because of who his parents are.”

Lazare’s father, Emmanuel Saadia, chimes in to suggest that maybe programs like Edgenuity are no match for this new generation of learners, kids like Lazare who have been interacting with algorithms and the internet their whole lives.

“Most of the kids this age, they're extremely conversant in computers, and they know how to get around this or that roadblock,” Saadia says. “They have a leg up already.”

But Simmons interjects.

“No, some kids have a leg up,” she says. “And that’s the problem.”

This article was originally published on September 03, 2020.